Psychology and Psychotherapy: Research Study

Artificial Intelligence, Education and Research: Epistemological, Technopolitical Tensions and Recommendations

Cláudia Helena dos Santos Araújo*1

Federal Institute of Education, Science and Technology of Goiás, Brazil

*Corresponding author: Cláudia Helena dos Santos Araújo, Federal Institute of Education, Science and Technology of Goiás, Anápolis, GO, Brazil. Email ID: helena.claudia@ifg.edu.br

Submission: January 20, 2026; Published: February 09, 2026

ISSN 2639-0612 Volume 9 Issue 3

Abstract

This article is a critically reflective theoretical essay that discusses the possibilities and contradictions of Artificial Intelligence (AI), with an emphasis on large language models and generative systems in the fields of education and research. Drawing on arguments developed in public debate and in dialogue with recent documents on competencies, use and regulation, we organize a thematic reading along four axes: (i) conceptual disputes and the re-centring of AI in public debate; (ii) educational and scientific uses and their epistemic limits; (iii) tensions surrounding authorship, plagiarism and technological dependence; and (iv) biases, disinformation and regulatory challenges. We argue that accelerated adoption combined with limited institutional guidance tends to weaken human agency in the absence of pedagogical mediation, governance and critical education. Finally, we propose recommendations for institutional policies and didactic-evaluative practices that promote AI literacy, academic integrity and digital sovereignty.

Keywords: Artificial intelligence; Education; Research; AI literacy; Ethics and regulation

Introduction

Artificial Intelligence (AI) technologies have a well-defined historicity dating back to the mid-twentieth century. Different strands (symbolic, statistical, connectionist) seek to automate tasks of pattern recognition, inference, prediction and data-driven decision-making. What changes in contemporary times is the scale and penetration of Generative AI (GenAI), especially Large Language Models (LLMs), whose conversational interfaces and integration into daily-use platforms have repositioned AI as a mass technology.

Discussions of technologies and AI in education and research call for conceptual and political care, given that they are shaped by socio-historical determinants and intersect with labour, social, philosophical and educational domains. As Araújo and Feenberg [1] state, “we explore the intersection between technology, society and critical theory”. This framing aligns with Feenberg’s account of ‘dilemmas of development’, in which contemporary challenges are linked less to moral imperfections attributed to human nature than to mismatches between individuals’ capacities and the structural complexity of problems characteristic of technological societies [1,2].

1 PhD in Education from the Pontifical Catholic University of Goiás (2012). Postdoctoral researcher in Cultural Studies at the Federal University of Rio de Janeiro (UFRJ) (2020). Faculty member of the Graduate Program - Academic Master’s in Education (IFG) and of the nationwide Professional Master’s Program in Professional and Technological Education (ProfEPT - IFG). Researcher at the Alfredo Bosi Chair of Basic Education, Institute of Advanced Studies, University of São Paulo (IEA-USP).

Although it is often presented as a ‘novelty’, AI has a historical trajectory that dates back to the 1950s, when early learning experiments and models were consolidated [3]. The emergent character of the contemporary debate, however, stems from the recent leap in social diffusion, driven by the popularization of large-scale language systems and the strengthening of laboratories and platforms. This repositioning has implications for education and research. Students and teachers may begin to write, study, program, translate, revise and search for information mediated by models that produce eloquent texts. In some pedagogical discourses, AI appears as a device for pedagogical innovation; in others, as a device for intensifying labour, standardizing curricula, enabling data surveillance and fostering dependence on platforms [4].

In this context, it becomes relevant to recognize the tension between academic production and the collaborative appropriation of knowledge. A significant part of the development that sustains current systems is linked to university ecosystems, research networks and public funding, which highlights the centrality of the university in building intellectual and technical infrastructure. At the same time, the transfer of scientific results into proprietary regimes may restrict the social circulation of knowledge and intensify technological dependencies [5]. For this reason, the defence of open science, transparency and public access emerges as a strategic dimension when analysing the conditions for producing and using AI in educational and scientific contexts.

From a conceptual standpoint, it is necessary to problematize the very term “artificial intelligence”. As Kaufman [6] suggests, the expression names a multifaceted phenomenon shaped by intentions, interests, and disputes. Still, a critical approach does not imply refusing the debate. Discussing AI from a counter-hegemonic perspective means interrogating it as a technopolitical technology, understood as an artifact that reorganizes power relations, labour practices, regimes of truth and forms of knowledge mediation [7].

This article aims to systematize a set of arguments about the possibilities and contradictions of AI in education and research, articulating them with contemporary debate and with recent public documents related to these issues. We ask: (a) what possibilities does GenAI offer for educational and scientific practices? (b) what tensions emerge around authorship, academic integrity, and disparities? and (c) what elements of governance and regulation are necessary to guide ethically responsible uses?

This is a critical-reflexive theoretical essay using a qualitative approach, built on two complementary and articulated movements. In the first, we systematize a theoretical exposition on AI in Education and Research, organized into axes that make explicit premises, tensions, and formative implications: (i) conceptual disputes and AI as epistemic infrastructure; (ii) possibilities of pedagogical and scientific use; (iii) contradictions related to authorship, academic integrity, technological dependence and disparities; and (iv) biases, disinformation, and risks of algorithmic trust.

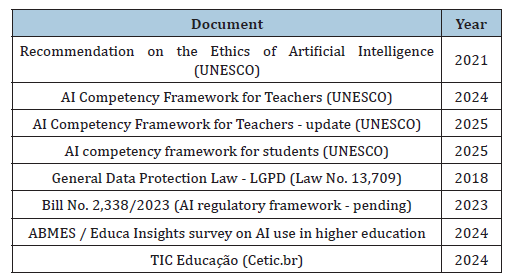

The second movement of this essay relies on a selected set of public documents on AI ethics, competencies and governance, chosen according to three criteria: (i) institutional relevance, that is, documents from international organizations and national normative frameworks; (ii) publication or update between 2018 and 2025; and (iii) relevance to higher education and research. International frameworks such as those from UNESCO and the OECD were included, as well as references from the Brazilian context such as the General Data Protection Law (LGPD), regulatory debates and public data on use. Opinion materials without institutional grounding were excluded. This is a mapping exercise, not a systematic review, and its inferences should be read as a critical-reflexive synthesis of the contemporary debate (Table 1).

Table 1: Documents analysed.

The selection seeks to show how technology becomes a dispute over political values. As this is an essay, it aims neither to exhaust the topic nor to represent the totality of existing debates.

GenAI, Education and Research: An Epistemological Discussion

Generative AI (GenAI) is not a single model but a heterogeneous class of foundation models built with different techniques and deployed in architectures with markedly different processing and memory capacities. This diversity can be observed in publicly accessible, open-source releases. Mistral AI, for example, released Mistral 7B as a 7.3-billion-parameter model under the Apache 2.0 license [8], and AI2’s OLMo initiative states that its code, weights and intermediate checkpoints are also released under Apache 2.0 [9]. Falcon-40B is likewise made available under Apache 2.0 [10], and GPT-NeoX-20B is described as an open-source language model associated with an Apache 2.0 license, showing that GenAI includes multiple open initiatives beyond proprietary platforms.

AI brings together a technological aim, related to expanding computers’ capacity to perform useful tasks, and a scientific aim, directed at using AI concepts and models to understand and investigate questions about human beings and other living beings [11]. LLMs can be understood as models trained to predict sequences of tokens from large volumes of text, rather than as agents endowed with consciousness. This helps explain why they can produce plausible responses and, at the same time, contain errors and biases [12].

In operational terms, AI can be described as an articulation among data, models and algorithms. This statistical-mathematical relationship influences how information is processed, prioritized and distributed, with implications for education and research. In addition, the field encompasses multiple architectures involving neural networks, machine learning, and deep learning, with an emphasis on LLMs, which underpin generative applications. LLMs are commonly trained as autoregressive next-token predictors at very large scale; for instance, GPT-3 was reported as an autoregressive language model with 175 billion parameters, trained on hundreds of billions of tokens [13]. These models produce text and multimodal content in integrated systems by estimating linguistic sequences from patterns learned during training, which explains why they can offer coherent answers while simultaneously presenting errors, gaps and biases.
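The idea of next-token prediction described above can be illustrated with a deliberately tiny sketch. It is not how any production LLM is built: it assumes a toy bigram model (counting which word follows which) in place of a neural network, and the corpus and function names are hypothetical. It only shows the principle that generation follows learned frequencies, not verified facts.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count how often each token follows another (toy stand-in for training)."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def next_token_probs(counts, prev):
    """Turn raw follow-up counts for `prev` into a probability distribution."""
    total = sum(counts[prev].values())
    return {tok: n / total for tok, n in counts[prev].items()}


def generate(counts, start, max_len=5):
    """Greedy autoregressive generation: append the most frequent follower each step."""
    out = [start]
    for _ in range(max_len - 1):
        followers = counts.get(out[-1])
        if not followers:
            break  # no continuation was ever observed for this token
        out.append(followers.most_common(1)[0][0])
    return out


# Hypothetical toy corpus, tokenized by whitespace.
corpus = (
    "the model writes text . the model writes errors . "
    "the model predicts text ."
).split()

counts = train_bigram(corpus)
probs = next_token_probs(counts, "model")
sample = generate(counts, "the", max_len=3)
```

In this sketch the continuation after "model" is chosen only because "writes" was seen more often than "predicts" in the toy corpus. Nothing in the procedure checks whether the resulting sentence is true, which is one way to read the coexistence of coherent answers with errors, gaps and biases.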

The technopolitical dimension of the problem becomes even more salient when one observes that the functioning of these systems depends on platforms with cloud infrastructure, energy, processing capacity and data governance. In digital contexts, algorithmic personalization, the recurrence of recommendations after certain searches and data prediction show how patterns of behaviour and interaction can be converted into signals for filtering, classifying and directing content, “using weight matrices learned from data” [12]. In the case of generative resources, this discussion also involves the need for transparency regarding usage logs, searches, privacy policies and supervision practices, since the asymmetry between users and platforms can increase informational vulnerabilities and social disparities.

The counter-hegemonic orientation defended in this text includes considering technological alternatives, such as resources developed in open source, as well as the geopolitical and epistemological diversification of technological references. The critical adoption of different systems outside the dominant axis of the Global North can help reduce dependencies and foster research and teaching practices articulated with principles of autonomy and critical thinking. In this sense, [14] argues that the political and economic choice for private digital platforms has reinforced the deepening of data colonialism over the Brazilian population and Brazil’s dependence on countries that concentrate infrastructures and data companies.

GenAI reorganizes academic practices of reading, writing, searching, and validating knowledge by mediating access to information through model-generated syntheses rather than direct consultation of primary sources. In this context, the risk can be framed in terms already documented in the human-automation literature, where users may display automation bias, that is, inappropriate overreliance on automated outputs, including reduced independent verification when a system presents confident recommendations. At the same time, research on large language models has systematically described hallucinations as a reliability issue in LLM outputs, which helps explain why fluent responses may still contain factual errors and fabricated content [15]. For education and research settings specifically, UNESCO’s guidance on GenAI emphasizes the need for human oversight, critical evaluation and verification practices, precisely because model outputs can be mistaken for authoritative truth if they are not checked against sources [16].

A captive market tends to form, as users come to depend on these systems for decision-making, writing, and content production, a dynamic reinforced by the rapid diffusion of GenAI in academic routines. For instance, the HEPI Student Generative AI Survey 2025 reports that 88% of students used generative AI tools for assessments, indicating widespread incorporation of such systems into study and writing practices. The OECD similarly notes that much GenAI is widely accessible and often used beyond institutional control due to its versatility, which helps explain why reliance can scale quickly once these tools become integrated into everyday academic workflows. Added to this is a neoliberal rationality that shifts responsibility for verification and self-regulation onto the individual [17]. In the case of research and educational science, this generates de-intellectualization and a rupture with critical, scientifically produced knowledge, a concern consistent with empirical studies linking intensive GenAI use to outcomes such as increased procrastination and self-reported memory loss in student populations.

GenAI in education and research presents challenges and possibilities, as well as contradictions that call for recommendations, because this mediation reorganizes practices of reading, writing, validating, and evaluating knowledge. GenAI operates by reorganizing patterns and can support idea formulation, synthesis, translation, and argumentative organization. At the same time, challenges emerge, such as the naturalization of model outputs, errors, and the reduction of intellectual work to the final result produced by the model. Addressing them requires understanding what type of knowledge comes to circulate through AI systems and what type of mediation the algorithmic system performs. This scenario leads us to recommend AI literacy in order to establish ethical limits in modes of use, with an emphasis on supervision, traceability and understanding how the system produces answers.

Supervision is understood as monitoring the use of a GenAI system, including its objective, the construction of the prompt (command) and the final product it generates, the verification of the response, the validation of sources and human accountability for the responses and their subsequent use. Traceability is understood as the capacity to document and reconstruct the path of knowledge production, recording prompts, the parameters and versions of the resource, verification steps, references and justifications for changes. Response production by the GenAI system is understood as the outcome of a statistical-mathematical relationship among data, model and algorithm: the text is generated according to probabilities derived from patterns learned in training data [12].
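One possible way to make the notion of traceability concrete is a simple usage record. The sketch below is only an illustration under stated assumptions: the class name `GenAIUseRecord`, its fields and the example values are hypothetical, chosen to mirror the elements listed above (prompt, version of the resource, verification steps, sources and a responsible person). It is not a format prescribed by any of the cited documents.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class GenAIUseRecord:
    """Hypothetical log entry documenting one use of a GenAI system."""

    objective: str       # why the system was used
    tool_name: str       # which resource was used
    tool_version: str    # version or release, needed to reconstruct the path
    prompt: str          # the command given to the system
    verification_steps: list = field(default_factory=list)
    sources_checked: list = field(default_factory=list)
    responsible_person: str = ""  # human accountable for the final use
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def is_traceable(self):
        """True only if the minimum elements for reconstruction are present."""
        return bool(
            self.prompt
            and self.tool_version
            and self.verification_steps
            and self.responsible_person
        )


# Example values are invented for illustration only.
record = GenAIUseRecord(
    objective="Draft an outline for a literature review section",
    tool_name="example-llm (hypothetical)",
    tool_version="2025-01 (hypothetical)",
    prompt="Suggest an outline for a review on AI literacy in schools.",
    verification_steps=["Checked every suggested reference in a database"],
    sources_checked=["Library catalogue search"],
    responsible_person="Student A",
)

# Serialize the record so it can accompany a declaration of use.
record_json = json.dumps(asdict(record), ensure_ascii=False, indent=2)
```

A record of this kind could accompany a declaration of use in coursework or a manuscript. The design choice is that a record with no verification steps or no named responsible person fails the `is_traceable` check, which reflects the emphasis on human accountability.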

The pedagogical and scientific dimension of GenAI uses depends on conditions of mediation, since it can represent a pedagogical resource or a mode of scientific fragility, depending on modes of use and appropriation. Among the possibilities, support for learning, assessment formats, the generation of examples, support for programming, the drafting of preliminary versions and initial indications of literature stand out. An important point of tension is that searching for initial indications of literature can neither stand in for the theoretical foundation of the text nor serve as the path of the literature review, considering the totality of the scientific process of conducting bibliographic surveying. However, it can be a supporting resource for the process of searching, reading, and systematizing findings from the literature.

The ABMES/Educa Insights survey [18] reports 29% daily use and 42% weekly use, totalling 71% of respondents who use AI frequently in study routines. In basic education, TIC Educação reports that seven in ten upper-secondary students who use the internet already use generative AI for school research, while only 32% say they received school guidance on how to use it. This need for mediated use is consistent with the following recommendation: “Recognizing that AI resources have uncertain potential in educational processes, presenting challenges and benefits for social development, it is important to foster their development while also considering the strict need for this development to be managed and carefully supervised by human agents who are responsible and committed to the goals of education” [5].

The tensions include the depletion of the formative process, the homogenization of styles, dependence on proprietary platforms, the weakening of open science and distancing from intellectual autonomy. In practice, this is evidenced in literature reviews increasingly conducted through mediations carried out on private platforms: instead of bibliographic surveying in specialized databases and public infrastructures, such as the CAPES Journal Portal, the use of generative AIs expands to locate, synthesize and select what will be read and cited. The consequence is not only methodological, but political.

This shift may reconfigure practices of searching, selecting, and synthesizing literature, displacing part of the research work to GenAI systems. No causal nexus is claimed here between the use of generative AIs and the retrenchment of public investments. What is evident is a convergence of trends in a context with budget constraints and disputes over priorities. When private solutions come to occupy the place of a “gateway” to scientific information, the risk of devaluing public infrastructures for knowledge access grows. In the case of the CAPES Journal Portal, the budget allocation fell from R$ 546.3 million (2023) to R$ 462.1 million (2024) [19].
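For scale, the two CAPES Journal Portal figures above can be turned into a relative change. This is a simple nominal calculation on the values as reported, with no inflation adjustment assumed:

$$
\frac{546.3 - 462.1}{546.3} = \frac{84.2}{546.3} \approx 0.154
$$

That is, a nominal reduction of about R$ 84.2 million, or roughly 15%, between 2023 and 2024.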

It is important to question under what conditions AI contributes to the teaching and learning process and to research. Recommendations point to planning by objectives, guidance on prompts as didactic strategies, as well as the need for justifications, successive versions of uses (usage history) and continuous supervision by the user [20].

With regard to authorship and academic integrity, the challenge is not reduced to prohibiting or allowing, but to systematizing criteria for authorship and evaluation in a context of mediation with algorithmic resources [21]. It is possible to conduct research using GenAI with transparency of use, the development of learning, and evaluation supported by a process of argumentation. Conversely, challenges grow such as plagiarism, the use of AI without due disclosure, false references, diluted authorship, superficial texts, the absence of human marks and exclusions arising from disparities in access and in the quality of resources and infrastructure. This context translates into problematizations such as questioning authorship in texts that used AI and the validity of the text that reports its use. In this sense, the composition of institutional policies with guidelines on integrity is indicated, including declarations of use and demonstration of the process of use [5].

The accelerated adoption of GenAI also results in technological dependencies and disparities such as access, infrastructure and literacy. Such elements are defined by use value and exchange value, that is, the operation of capital as a function of processing capacity, data availability, model domain and control of cloud and energy on platforms. Feenberg (2019) calls this social design, which represents a distinct political configuration of technology, in which decisions about design and control also distinguish power and restrict alternatives for democracy. That is, debate on a democratic agenda for the use of GenAI in all sectors of society is necessary.

Discussion about a democratic agenda that highlights the political characteristic of GenAI systems includes the economic captures that occur via data gathering, regardless of the GPT models developed and the infrastructure licensed for such. That said, it is relevant to mention the capitalism of users’ data abduction [4]. This claim is illustrated by Murgia’s (2025) idea that one way to detect predictive data patterns is to show AI millions of labelled examples, requiring humans to annotate such data one by one so that supercomputers can carry out their analysis.

Another dimension that aggravates the absence of transparency is the difficulty of traceability and of attributing authorship in AI-mediated development environments. Recent reports indicate that, in engineering teams, there is a growing shift from the work of writing code to reviewing, guiding, and being accountable for code generated by AI systems, which reconfigures the chain of responsibilities and makes supervision of the software production process more complex. In public statements, Anthropic CEO Dario Amodei stated that, in many teams, AI already accounts for around 90% of the code produced, with engineers mainly guiding, reviewing, and supervising the remainder [22].

On the one hand, there is an increase in productivity in contexts with support and a reduction in linguistic and operational barriers. On the other hand, a double exclusion intensifies, of surveillance and of data extraction, along with the tendency to make teachers’ and researchers’ work precarious. The use of AI in Higher Education Institutions needs to be guided by control of infrastructure, data and terms. It is recommended that institutional research guidelines include the evaluation of open alternatives and data protection as a requirement.

Such protection is necessary even to question accelerated adoption. Biases and disinformation, in turn, are not mere errors; they reflect data, objectives and social structures. It is recommended that GenAI be used as support for critical reading, comparing versions, identifying gaps and mapping biases, through supervision of use and the integration of education and AI literacy. However, it can reproduce stereotypes, reinforce discrimination and amplify fake news. It is important to guide users in problematizing biases, forms of checking, and the format of production, not only of consumption.

For this reason, the problem shifts from fascination with AI to the existing conditions of mediation: what knowledge circulates, on what assumptions, which data feed the models, and who controls the infrastructure of platforms, APIs, clouds and data centres that sustain textual and imagistic production. In education, this requires AI literacy that considers pedagogical and technical foundations and a critical reading grounded in the Critical Theory of Technology [5] (Feenberg, 2019), considering ethics, rights, existing disparities and environmental sustainability.

Educational and Scientific Possibilities: Uses, Epistemic Limits and Scenarios of Institutional Guidance

Among the possibilities, uses that support study and research stand out, such as organizing ideas, developing writing outlines, suggesting readings, revising language, generating examples, simulating debates, supporting programming and conducting exploratory analysis. In the classroom, such resources can foster learning when guided by objectives, teacher mediation and assessment criteria that value process, argumentation and authorship.

The diffusion of these uses is accelerating. In higher education, a survey conducted by ABMES in partnership with Educa Insights (July 2024) indicated frequent AI use by 71% of the students interviewed, combining daily and weekly use, with emphasis on study routines and answering questions. In upper secondary education, results released by Cetic.br [23] indicate that 70% of students already use AI, but only 32% received school guidance on how to use it. The data suggest rapid diffusion, albeit unequal and with low levels of school mediation.

In academic research, AI can assist in stages such as literature review, text synthesis and drafting. However, such use is not equivalent to scientific rigor involving problem formulation, methodological choices, interpretation and authorial responsibility, since these characteristics remain human. The ethical and responsible adoption of GenAI involves transparency about use through disclosure, source verification, fact-checking and understanding the system’s limitations.

The documents and guidelines selected for this essay are briefly presented below as they bear on education and research. Together they provide elements that support the discussion of GenAI in teaching, learning and scientific practices: theoretical foundations, ethical-normative frameworks, Brazilian legislation and reports on infrastructure and political economy.

In the field of competencies and training, two UNESCO references stand out. The AI Competency Framework for Teachers [24] aims to discuss teacher education in AI across technical, ethical and pedagogical dimensions. The AI Competency Framework for Students [25] supports an understanding of AI literacy as critical formation by emphasizing agency, responsibility and students’ understanding of challenges.

The Recommendation on the Ethics of AI (UNESCO, 2021) presents a governance and ethics approach, indicating the need to ground principles and institutional guidelines. The OECD AI Principles (2019) function as intergovernmental principles for trustworthy AI, considering transparency, accountability and the protection of rights in recommendations and parameters for use.

In the Brazilian context, the LGPD (Law No. 13,709/2018) provides a legal framework for discussing privacy, data processing and the risks associated with platformization and the outsourcing of digital services in education and research. Bill No. 2,338/2023 (pending) makes it possible to situate the national regulatory debate as a contested field in which accountability and governance are still under construction, and to analyse tensions among innovation, rights protection and economic interests.

The publication [26] contributes to systematizing the Brazilian educational scenario regarding students’ AI use and forms of school guidance. The sectoral study “AI in Education” (2025) expands the diagnosis by presenting conditions of infrastructure, uses, perceptions and risks, offering a basis to discuss disparities and governance challenges.

On the regulatory front, Brazil has advanced the debate on Bill No. 2,338/2023, called the AI legal framework, approved in the Senate in December 2024 and forwarded to the Chamber of Deputies in 2025, where it is being processed in a Special Committee and remains subject to plenary consideration. The text proposes principles and governance mechanisms guided by the centrality of the human person, transparency, and responsibility.

In the educational field, in addition to the LGPD (Law No. 13,709/2018), competency-oriented frameworks gain relevance. UNESCO provides AI competency frameworks for teachers and students, emphasizing human agency, ethics, sustainability, AI fundamentals and pedagogical dimensions. It is therefore recommended that systems and institutions develop policies on transparency, disclosure of use, academic integrity, data protection, teacher education and assessment practices consistent with a critical pedagogy.

These documents and data configure a GenAI scenario still in dispute, which seeks to guide competencies, ethics, and regulation. However, when transposed into the routines of teaching and research, concrete contradictions emerge, especially regarding authorship, traceability, biases, dependence and academic integrity.

Contradictions: Biases, Authorship, Plagiarism, Dependence and Disinformation

The same technology that signals possibilities also intensifies contradictions. One of them is the tension surrounding AI uses in education and research. Students may resort to AI to produce answers without developing the elaboration process, hollowing out the formative dimension of academic work. This calls for assessment practices consistent with the current scenario, through activities that require versions, justifications, reflections, source traceability and peer validation.

Another contradiction is technological dependence. With the concentration of models and infrastructures in a few large technology companies and Global North countries, pedagogical and scientific decisions may be externalized to platforms. This touches on digital sovereignty and leads us to question standards, limits of use, privacy terms and costs. Institutions need to discuss guidelines, protocols, open alternatives and governance in order to preserve human agency and autonomy, as Trinca [27] argues when stating that it is “essential to have a national AI, with a public base, in free/open-source software and open models.”

Social and educational disparities tend to widen when paid versions, stable connectivity and AI literacy repertoires become the means of obtaining competitive advantages. UNESCO states that GenAI systems in education can increase disparities in access to technology and educational resources (2023). Adoption without institutional guidance and without adequate infrastructure can increase the exclusion of people without full access to digital technologies. In the Brazilian case, evidence from Cetic.br/NIC.br shows an expansion of access alongside the persistence of inequalities in connectivity conditions and in the use of digital resources in schools [23]. In addition, TIC Educação indicates GenAI use by students and highlights the problem of low mediation/guidance, which tends to widen learning disparities [28].

This context highlights biases and warns about the construction of realities by systems that combine language and historical data, potentially reproducing prejudices in the generation of texts, images, videos and other content. Biases reflect social structures and choices in data, curation, and optimization objectives. In the Brazilian context, marked by the circulation of disinformation, the issue becomes even more urgent. As the authors of [29] point out, disinformation “takes on new contours with Generative Artificial Intelligence (GenAI),” and education needs to consider “algorithms in the amplification of biases, in the creation of deepfakes and in informational bubbles” [30]. That is, in a GenAI scenario, the capacity to produce synthetic texts and images with an appearance of truthfulness increases, raising risks of manipulation.

Thus, critical education needs to include: (i) source checking and triangulation; (ii) understanding how models generate responses (probabilities, limits and “hallucinations”); (iii) a technopolitical reading of biases and disparities; and (iv) ethics of use, with respect for privacy, copyright, and data protection [31-36].

Conclusion

GenAI is already integrated into educational and scientific everyday life. The question, therefore, is not whether it will be used, but how, by whom and in the service of which projects. By articulating public debate, literature and normative frameworks, we show that possibilities such as support for learning, writing, and research go hand in hand with contradictions such as authorship, dependence, disparities and biases.

Evidence discussed in this essay reinforces the urgency of institutional mediation. Surveys indicate rapid diffusion of GenAI in higher education, with 71% of students reporting frequent use [18] and similarly high use in upper secondary education, where seven in ten students report using GenAI for school research while only 32% report having received school guidance [26]. In this context, the risks of automation bias and the production of fluent but unreliable outputs documented in the literature [15] make verification, transparency and traceability central to educational and research practices aligned with academic integrity [16].

The institutional response must be pedagogical and political: to prepare teachers and students for critical agency, to establish rights-oriented governance, and to recognize the materiality of technology. More than learning “prompts,” the task is to build AI literacy as a dimension of human formation, capable of contesting meanings and sustaining educational projects committed to social justice, intellectual emancipation and digital sovereignty. This orientation is consistent with the recommendation that it is important to foster [AI] development while also considering the strict need for this development to be managed and carefully supervised by human agents, committed to educational goals [5].

Higher Education Institutions cannot limit themselves to publishing results. They also have a responsibility to strengthen the public-good ecosystem that open science constitutes. In doing so, they preserve the conditions that train talent and enable the production of knowledge that sustains and drives advances in GenAI. At the same time, as open and publicly accessible initiatives demonstrate, institutional strategies can include evaluating open-source alternatives alongside proprietary platforms, thereby reducing technological dependence and supporting more transparent research and teaching ecosystems [8,9].

Finally, there remains the challenge of articulating innovation with rights through guidelines and training. Public and institutional frameworks can guide curricula, teacher education and usage policies, but tensions persist, such as fragile regulation and data capture by market interests. We therefore recommend an institutional AI policy that makes explicit its parameters for data, uses, assessment and academic integrity. This includes parameters for data protection and procurement under the LGPD (Law No. 13,709/2018), acceptable uses and disclosure requirements, assessment practices that value process and authorship, and clear standards for academic integrity, verification, and traceability (UNESCO, 2021; UNESCO, 2023; PL No. 2,338/2023).

References

- Araújo Santos CH, Feenberg Andrew (2024) Dialogues with Andrew Feenberg: Technology, education and artificial intelligence. Revista Tecnologia Educacional 242: 6-18.

- Feenberg Andrew (2002) Beyond the dilemma of development. In: Feenberg Andrew (2nd edn), Transforming Technology: A Critical Theory Revisited, Oxford University Press, New York, USA.

- Dartmouth College (2026) Artificial Intelligence (AI) Coined at Dartmouth.

- Zuboff S (2022) Surveillance capitalism or democracy? The death match of institutional orders and the politics of knowledge in our information civilization. Organization Theory.

- Evangelista RA, Leonardo RC (2025) Between technique and politics: Artificial intelligence and higher education: Interview with Prof. Dr. Rafael Evangelista. Revista Docência do Ensino Superior 15: 1-19.

- Kaufman D (2022) Demystifying artificial intelligence. (2nd edn), Autêntica, Belo Horizonte, Brazil.

- Fernandes J, Araújo CH (2026) Generative artificial intelligence and pedagogical-didactic work: A critical analysis of AI gen in the educational context. Journal of Interdisciplinary Studies 8(1): 1-14.

- Mistral AI (2023) Mistral 7B.

- Allen Institute for AI (AI2) (2024) OLMo: Open Language Model.

- TII (Technology Innovation Institute) (2025) Falcon-40B (tiiuae/falcon-40b). Hugging Face.

- Boden MA (2020) Artificial intelligence: A very brief introduction. Translation: Fernando Santos. Unesp Publishing Foundation, São Paulo, Brazil.

- Aline P, Daniela V, Jessica R (2024) Language models. In: Caseli Helena de Medeiros, Nunes Maria das Graças Volpe (Eds.), Natural Language Processing: Concepts, Techniques and Applications in Portuguese. (2nd edn), BPLN.

- Brown TB, Mann B, Ryder N, Subbiah M, Kaplan J, et al. (2020) Language models are few-shot learners. In: Advances in Neural Information Processing Systems (NeurIPS).

- Joyce S (2021) Artificial intelligence, predictive algorithms and the advance of data colonialism in Brazilian public health. In: Francisco CJ, Joyce S, Amadeu da SS (Eds.), Data Colonialism: How the Algorithmic Trench Operates in the Neoliberal War, Literary Autonomy, São Paulo, Brazil.

- Huang L, Yu W, Ma W, Zhong W, Feng Z, et al. (2025) A survey on hallucination in large language models: Principles, taxonomy, challenges and open questions. ACM Transactions on Information Systems.

- UNESCO (2023) Guidance for generative AI in education and research. Paris, France.

- Dardot P, Laval C (2016) The new reason of the world: An essay on neoliberal society. Translated by Mariana Echalar. Boitempo, São Paulo, Brazil.

- Associação Brasileira De Mantenedoras De Ensino Superior (ABMES), Educa Insights (2024) Artificial intelligence in higher education, Brazil.

- CAPES, Budget - Evolution in reais 2015-2025.

- Araújo Cláudia HS (2025) AI in education and research: Possibilities and contradictions. Oral communication (lecture).

- Caroline RL, Longhinotti FM (2025) Academic integrity in the ChatGPT era: Ethical challenges and the new frontiers of innovation. Brazilian Journal of Professional and Technological Education 3(25): e17803.

- Katherine LI (2025) Anthropic CEO says 90% of code written by teams at the company is done by AI - but he's not replacing engineers just yet. Business Insider.

- Cetic.br, NIC.br (2025) Artificial intelligence in education: Uses, opportunities and risks in the Brazilian context.

- UNESCO (2024) AI competency framework for teachers. Paris, France.

- UNESCO (2025) AI competency framework for students. Paris, France.

- Cetic.br (2025) ICT Education 2024: Complete book. Brazilian Internet Steering Committee (CGI.br), São Paulo, Brazil.

- Mayra T (2025) Indiscriminate use of AI threatens academic training and digital sovereignty.

- Cetic.br, NIC.br (2025) ICT Education 2024: 70% of high school students use AI, but only 32% have school guidance.

- Azevedo CM, Helena dos SA, Soares e SB, Carolina VL (2025) Disinformation, artificial intelligence and education: Epistemic challenges and formative proposals for a critical curriculum. Pedagogical Notebook 22(12): e20667.

- Russell SJ, Norvig P (1995) Artificial intelligence: A modern approach. (1st edn), Prentice Hall, Englewood Cliffs, NJ, USA.

- Agência Brasil (2024) Seven out of ten students use AI in their study routine. Rio de Janeiro.

- Agência Brasil (2024) Nearly 90% of Brazilians admit to having believed in fake news.

- Câmara dos Deputados (2025) Proposal: Bill 2.338/2023 - Processing Information. Chamber of Deputies, Brazil.

- Maslej N (2023) The AI index report 2023. Stanford HAI, Stanford, California, USA.

- OECD (2024) Data centres and data transmission networks. OECD, Paris, France.

- Senado Federal (2024) Senate approves regulation of artificial intelligence; text goes to the chamber of deputies.

© 2026 Cláudia Helena dos Santos Araújo. This is an open access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and build upon your work non-commercially.

This work is licensed under a Creative Commons Attribution 4.0 International License. Based on a work at www.crimsonpublishers.com.
