- Submissions

Full Text

COJ Robotics & Artificial Intelligence

Bias, Fairness, and Ethical Accountability in Artificial Intelligence: A Human Centered Perspective on Algorithmic Decision-Making

Rahul Jain1*, Harsh Nagar1, Om Prakash Pal2, Abhishek Teraiya1, Parth Shah1 and Yash Vasoya1

1Department of Computer Engineering, Marwadi University, India

2Department of CSE, Graphic Era (Deemed to be University), India

*Corresponding author: Rahul Jain, Department of Computer Engineering, Marwadi University, India

Submission: January 10, 2026;Published: March 05, 2026

ISSN:2832-4463 Volume5 Issue2

Abstract

As AI systems increasingly influence critical decisions in sectors like healthcare, recruitment, finance, and criminal justice, concerns around bias and fairness have intensified. Although often viewed as neutral, AI can unintentionally replicate or amplify social inequalities due to biased training data, narrow performance metrics, or limited contextual design-disproportionately impacting marginalized communities. This study underscores that AI ethics is fundamentally a human issue affecting dignity, trust, opportunity, and justice. By examining fairness frameworks and real-world cases of algorithmic bias in hiring, healthcare, and predictive policing, it reveals how well-meaning systems can produce harmful outcomes. The paper also critiques existing mitigation strategies for addressing only surface-level issues while overlooking deeper structural inequities. It advocates for a human-centered AI approach that emphasizes transparency, inclusivity, stakeholder participation, continuous auditing, explainability, and accountability. Ultimately, the study calls for aligning AI development not just with technical capability, but with core human values such as fairness, responsibility, and equity to ensure just and sustainable societal impact.

Keywords:AI ethics; Algorithmic bias; Fairness in AI; Ethical decision-making; Human-centered AI; Responsible AI; Machine learning fairness; Data bias; Algorithmic accountability; Transparency in AI; Social impact of AI; Inclusive AI systems; Justice in AI; AI governance; Moral responsibility in AI

Introduction

Motivation

Artificial Intelligence (AI) systems are increasingly mediating access to employment, credit, healthcare and justice. In many cases, decisions that once relied on human judgment are now determined by machine learning models trained on vast datasets. From AI-driven hiring tools and automated loan approvals to predictive policing and healthcare risk assessments, algorithmic systems operate at unprecedented scale and speed. While these systems promise efficiency, consistency, and objectivity, real-world evidence reveals a troubling pattern: AI frequently reproduces and amplifies existing societal inequities. High-profile cases-such as biased recidivism prediction systems, discriminatory lending algorithms, and unequal healthcare risk scoring-demonstrate that algorithmic decision-making can generate tangible harm, particularly for historically marginalized populations. The growing reliance on AI in high-stakes contexts therefore presents an urgent ethical challenge. As algorithmic governance expands, ensuring fairness, transparency, and accountability in AI systems is no longer optional-it is essential for safeguarding human dignity and social justice.

Problem statement

Despite widespread assumptions that AI systems are inherently objective, they are deeply shaped by the data on which they are trained, the optimization objectives they pursue and the design choices embedded by developers. Bias in AI arises through multiple pathways:

A. Data bias, reflecting historical discrimination and

representation gaps.

B. Model bias, emerging from algorithmic design and

optimization trade-offs.

C. Societal bias, where structural inequities become encoded

into computational systems.

These biases manifest across critical domains:

A. Hiring systems that disadvantage women or minority

candidates.

B. Facial recognition systems with higher error rates for

people of color.

C. Healthcare algorithms that underestimate medical needs

of marginalized communities.

D. Financial models that systematically restrict access to

credit.

Moreover, defining “fairness” itself presents theoretical and practical dilemmas. Competing fairness definitions such as demographic parity, equal opportunity and individual fairnessoften conflict mathematically, making it impossible to satisfy all simultaneously. This creates a complex landscape where technical performance, ethical principles and regulatory accountability intersect yet often remain misaligned.

3.3. Research objectives

This paper seeks to systematically examine the ethical, technical, and regulatory dimensions of algorithmic bias in AI systems. The primary objectives are:

a. To analyze the mechanisms through which bias emerges

and propagates in AI systems, including data collection, labeling

practices, and model optimization.

b. To critically evaluate competing fairness definitions,

identifying their theoretical foundations, trade-offs, and

limitations in high-stakes contexts.

c. To situate algorithmic bias within broader ethical

frameworks, including utilitarian, deontological, virtue-based,

and care-oriented perspectives.

d. To assess current mitigation strategies, spanning technical

debiasing methods, organizational governance mechanisms,

and regulatory interventions.

e. To examine sector-specific case studies, particularly in

healthcare, criminal justice, finance, and employment.

f. To identify future challenges and governance pathways

for developing responsible, transparent, and accountable AI

systems.

Through this structured inquiry, the paper aims to bridge the gap between computational design and societal consequence.ecurring patterns and their connections to implementation processes.

Contributions

This manuscript offers a distinctive contribution by bridging the gap between technical analyses of algorithmic bias and broader ethical as well as social justice perspectives, thereby enabling a more holistic understanding of fairness in AI systems. It presents a comparative evaluation of prominent fairness definitions, drawing attention to their inherent incompatibilities and the practical tradeoffs they impose in real-world implementation. Moving beyond abstract theory, the study delivers a domain-specific investigation of bias in high-stakes environments, with particular emphasis on healthcare AI systems where algorithmic decisions can have life-altering consequences. In addition, it critically examines existing governance structures, including regulatory frameworks, organizational practices, and explainability mechanisms, to assess their effectiveness in mitigating systemic inequities. By grounding technical insights in the lived experiences of impacted populations, the manuscript adopts a human-centered lens that underscores the societal implications of automated decision-making. Through the integration of computational processes with ethical and institutional realities, this work advances a multidisciplinary perspective on the development of responsible and accountable AI systems.

Systematic Review Methodology

Review design and framework

This study adopts a Systematic Literature Review (SLR) methodology to synthesize scholarly evidence on algorithmic bias, fairness in Artificial Intelligence (AI), data-driven discrimination, mitigation strategies, and governance mechanisms. The review follows the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA 2020) framework to ensure transparency, replicability, and methodological rigor.

The objective of this systematic review is to:

1. Identify key sources of algorithmic bias across domains.

2. Examine competing definitions of fairness in machine

learning.

3. Evaluate empirical evidence of bias in healthcare, finance,

employment, and criminal justice.

4. Assess technical and organizational mitigation strategies.

5. Analyze governance, accountability, and regulatory

frameworks.

This review is qualitative in nature, as the included studies differ substantially in methodologies, datasets, and evaluation metrics, precluding formal meta-analysis.

Information sources and search strategy

A structured literature search was conducted across the

following academic databases:

a) ACM Digital Library

b) IEEE Xplore

c) SpringerLink

d) ScienceDirect (Elsevier)

e) Google Scholar (for supplementary cross-verification)

The search was conducted between January 2024 and January 2026.

Search keywords

The search string combined Boolean operators and domainspecific

terminology:

(“algorithmic bias” OR “AI bias” OR “machine learning fairness”)

AND (“healthcare” OR “criminal justice” OR “finance” OR

“hiring”)

AND (“explainability” OR “transparency” OR “mitigation” OR

“regulation”)

Additional backward and forward citation tracking was

performed to identify highly cited foundational works.

Eligibility Criteria

Inclusion criteria: Studies were included if they met the

following criteria:

A. Peer-reviewed journal articles or major conference

proceedings (ACM, IEEE, Springer, Nature, Science).

B. Published between 2012 and 2026.

C. Focused explicitly on algorithmic bias, fairness definitions,

AI governance, or mitigation strategies.

D. Provided empirical, theoretical, or framework-based

contributions.

E. Addressed high-stakes domains such as healthcare,

finance, employment, or criminal justice.

Exclusion criteria: Studies were excluded if they:

A. Were non-peer-reviewed opinion pieces or blog articles

(except landmark investigative reports).

B. Focused solely on general AI performance without

fairness or bias relevance.

C. Lacked methodological transparency.

D. Addressed non-AI-related discrimination.

Study selection process

The study selection followed the PRISMA four-stage screening process:

a) Identification: 52 records were initially identified across

databases and reference lists.

b) Screening: After removal of duplicates and non-scholarly

sources, 46 records remained.

c) Eligibility: Full-text assessment excluded three articles

due to insufficient methodological rigor or limited relevance.

d) Inclusion: A total of 43 studies were included in the

qualitative synthesis.

Two independent reviewers screened titles, abstracts, and full texts. Disagreements were resolved through discussion and consensus to reduce selection bias.

Data extraction and coding

A structured data extraction template was developed to ensure consistency. The following information was recorded for each study:

A. Author(s) and publication year

B. Domain of application

C. Type of bias examined

D. Fairness definition (if applicable)

E. Methodological approach (empirical, theoretical,

experimental)

F. Mitigation strategy (if proposed)

G. Governance or regulatory implications

A. Author(s) and publication year

B. Domain of application

C. Type of bias examined

D. Fairness definition (if applicable)

E. Methodological approach (empirical, theoretical,

experimental)

F. Mitigation strategy (if proposed)

G. Governance or regulatory implications

Thematic coding was conducted manually using qualitative synthesis techniques.

Quality assessment

To assess methodological robustness, studies were evaluated

using adapted quality criteria based on established SLR standards:

a) Clarity of research question

b) Transparency of dataset description

c) Reproducibility of methods

d) Statistical or theoretical rigor

e) Practical relevance

Studies were categorized as high, moderate, or exploratory quality. All 43 selected studies met minimum quality thresholds.

Data synthesis approach

Given heterogeneity in methodologies and domains, a narrative

synthesis approach was adopted. The synthesis focused on:

1. Identifying recurring bias mechanisms across domains

2. Comparing fairness definitions and their incompatibilities

3. Evaluating mitigation effectiveness and trade-offs

4. Examining regulatory enforcement gaps

5. Linking technical findings with sociotechnical frameworks

Cross-domain comparisons were conducted to identify structural commonalities in algorithmic bias.

Limitations of the review

Several limitations should be acknowledged:

a. The review is restricted to English-language publications.

b. Quantitative meta-analysis was not feasible due to

heterogeneous methodologies.

c. Rapidly evolving AI technologies may render some

findings temporally sensitive.

d. Some industry bias mitigation results lack full

transparency due to proprietary constraints.

Despite these limitations, the review provides a comprehensive synthesis of contemporary scholarship on algorithmic bias and fairness in AI.

Ethical considerations

As this study analyses publicly available scholarly literature, no human subjects or primary data collection were involved. Ethical approval was therefore not required. This systematic review applies PRISMA 2020 standards to rigorously synthesize interdisciplinary research on algorithmic bias. By integrating theoretical foundations, empirical case studies, mitigation strategies, and governance analyses, the methodology ensures a structured and replicable examination of fairness challenges in contemporary AI systems.

Literature Review

Review objective

To systematically synthesize peer-reviewed and high-impact

scholarly literature examining:

a. Sources and mechanisms of algorithmic bias

b. Fairness definitions and trade-offs

c. Data-driven bias in healthcare and finance

d. Governance, legal, and accountability frameworks

e. Bias mitigation strategies

f. Explainability and transparency mechanisms

PRISMA flow summary

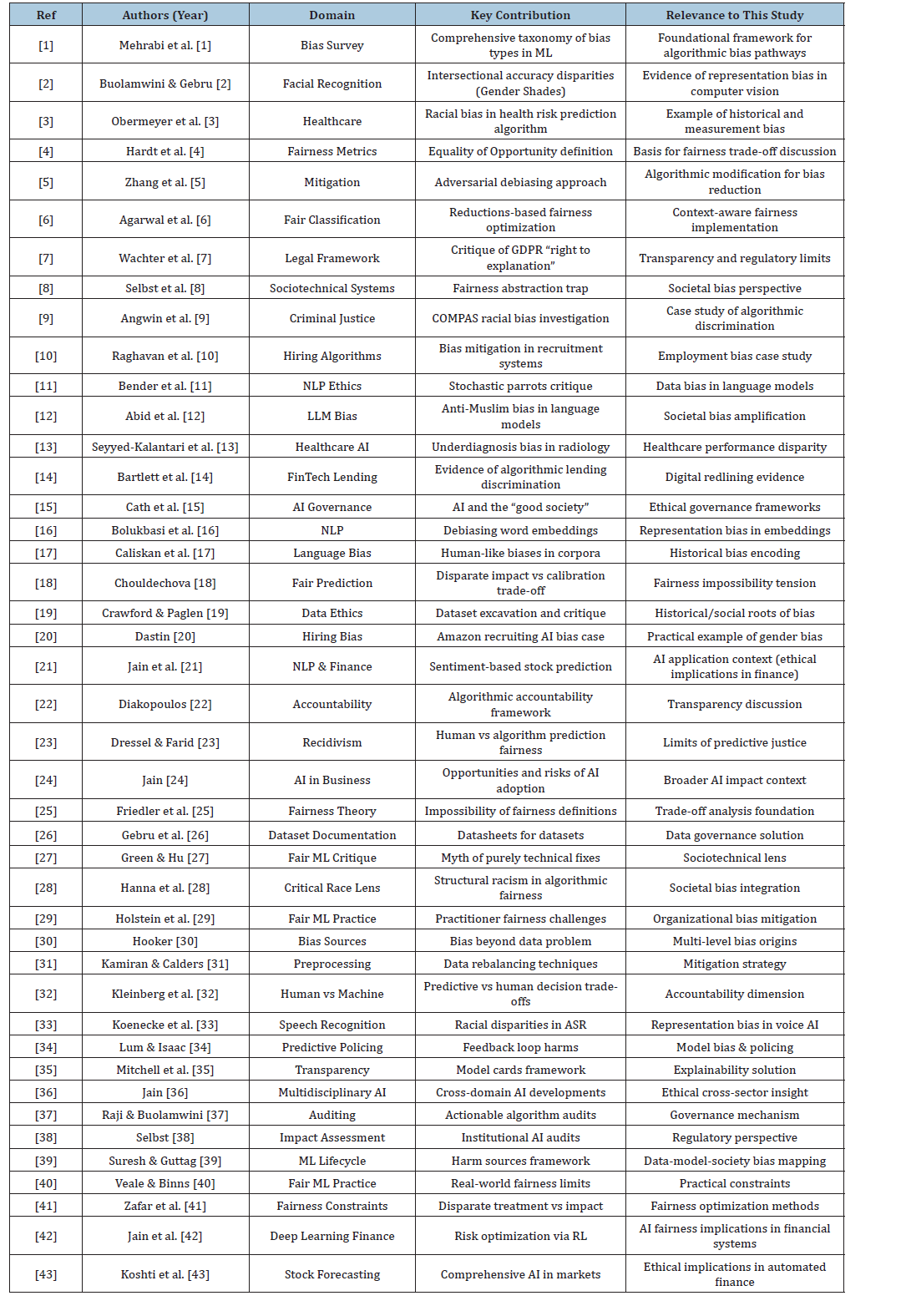

(Tables 1&2) [1-43].

Table 1:PRISMA flow summary.

Table 2:Detailed literature review.

Inclusion and exclusion criteria

Inclusion criteria:

1) Peer-reviewed journal articles or major conference

proceedings

2) Empirical evidence of AI bias

3) Formal fairness definitions or mitigation frameworks

4) Healthcare, finance, hiring, or criminal justice applications

5) Governance, regulatory, or accountability analyses

6) Studies published 2012–2026

Exclusion criteria:

1) Opinion-only blog posts (unless widely cited investigative

reports)

2) Non-AI related bias literature

3) Non-technical summaries without conceptual

contribution

Thematic synthesis of included studies

Theme 1: Foundations and taxonomies of algorithmic bias

Studies: [1,16,17,25,30,39]

These works provide theoretical grounding:

a) Mehrabi et al. [1] propose a structured taxonomy of bias

types.

b) Bolukbasi et al. [16] and Caliskan et al. [17] demonstrate

bias in word embeddings.

c) Friedler et al. [25] prove fairness impossibility constraints.

d) Hooker [30] argues bias extends beyond data.

e) Suresh & Guttag [39] map harm across ML lifecycle.

Synthesis Insight: Bias emerges across data, modelling, deployment, and societal layers-not merely from skewed datasets.

Theme 2: Criminal justice & predictive policing

Studies: [9,23,32,34]

a) Angwin et al. [9] exposed racial bias in COMPAS.

b) Dressel & Farid [23] compared algorithmic vs human

predictions.

c) Lum & Isaac [34] revealed predictive policing feedback

loops.

d) Kleinberg et al. [32] explored trade-offs between human

and machine decisions.

Synthesis Insight: Justice systems face fairness trade-offs where calibration and error parity cannot coexist simultaneously.

Theme 3: Healthcare AI bias

Studies: [3,13]

a. Obermeyer et al. [3] demonstrated cost-based proxy bias

in risk prediction.

b. Seyyed-Kalantari et al. [13] showed underdiagnosis bias

in radiology AI.

Synthesis Insight: Healthcare AI bias stems largely from flawed

proxies and demographic underrepresentation.

Theme 4: Employment & hiring algorithms

Studies: [10,20]

1) Dastin [20] documented Amazon’s biased recruiting tool.

2) Raghavan et al. [10] examined mitigation in hiring

systems.

Synthesis Insight: Historical employment data encodes gender bias; removing protected attributes does not eliminate proxy discrimination.

Theme 5: Financial & lending discrimination

Studies: [14,21,42,43]

a) Bartlett et al. [14] empirically demonstrated FinTech

discrimination.

b) Jain et al. [21,42,43] explore AI in finance, highlighting

implications for automated risk modelling.

Synthesis Insight: Digital redlining persists through proxy variables like ZIP codes and alternative credit data.

Theme 6: Fairness definitions & trade-offs

Studies: [4,18,25,41]

a) Hardt et al. [4] formalized Equality of Opportunity.

b) Chouldechova [18] highlighted calibration trade-offs.

c) Zafar et al. [41] proposed fairness constraints.

Synthesis Insight: Mathematical fairness definitions are mutually incompatible under differing base rates.

Theme 7: Mitigation strategies

Studies: [5,6,31,29]

a) Zhang et al. [5] introduced adversarial debiasing.

b) Agarwal et al. [6] proposed reductions approach.

c) Kamiran & Calders [31] developed preprocessing

techniques.

d) Holstein et al. [29] documented practitioner fairness

challenges.

Synthesis Insight: Technical mitigation reduces bias but often decreases accuracy and does not address structural causes.

Theme 8: Governance, regulation & accountability

Studies: [7,15,22,37,38,40]

a) Wachter et al. [7] critique GDPR explainability.

b) Cath et al. [15] discuss AI governance.

c) Raji & Buolamwini [37] advocate algorithm auditing.

d) Selbst [38] proposes institutional impact assessments.

Synthesis Insight: Regulatory frameworks exist but enforcement remains limited; auditing is emerging as best practice.

Theme 9: Sociotechnical & critical perspectives

Studies: [8,19,27,28]

a) Selbst et al. [8] introduce abstraction trap.

b) Crawford & Paglen [19] critique dataset genealogy.

c) Hanna et al. [28] integrate critical race theory.

Synthesis Insight: Bias must be understood as structural and sociotechnical-not purely computational.

Theme 10: Transparency & explainability

Studies: [22,35]

a) Diakopoulos [22] defines algorithmic accountability.

b) Mitchell et al. [35] introduce model cards.

Synthesis Insight: Documentation frameworks improve transparency but require institutional enforcement.

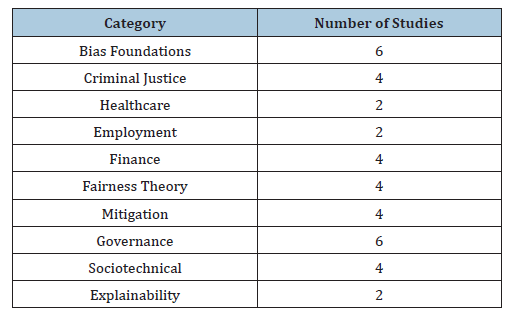

Cross-theme quantitative trends

(Table 3)

Table 3:(Table 3)

Critical Analysis and Discussion

The preceding sections have examined algorithmic bias from technical, ethical, sociological, and regulatory perspectives. This section moves beyond descriptive synthesis to provide a critical evaluation of patterns across studies, identify theoretical tensions, assess practical implications, and highlight research gaps and emerging challenges.

Cross-study comparison

A comparative analysis of the reviewed literature reveals strong convergence across domains-healthcare [3,13], criminal justice [9,23,34], finance [14], and employment [10,20]-in identifying three dominant pathways of bias: data bias, model bias, and societal bias. Despite differences in application contexts, the structural mechanisms are strikingly similar

In healthcare, bias frequently originates from flawed proxy variables (e.g., healthcare cost as a proxy for need [3]) and underrepresentation in clinical datasets [13]. In criminal justice, predictive tools inherit historical arrest disparities [9,34]. Financial algorithms reproduce digital redlining via proxy variables such as ZIP codes [14]. Hiring systems encode historical workforce imbalances [20]. Across domains, biased outcomes are rarely isolated incidents but predictable consequences of historically skewed data combined with optimization objectives that prioritize efficiency over equity

Mitigation research demonstrates mixed results. Preprocessing techniques [31], adversarial debiasing [5], and fairness-constrained optimization [6,41] reduce measurable disparities, yet empirical studies consistently show trade-offs in predictive accuracy or unintended side effects. Governance-oriented approaches such as auditing [37], impact assessments [38], and dataset documentation frameworks [26] improve transparency but remain weakly enforced in practice.

Collectively, the literature indicates that bias is systemic rather than anomalous, embedded within the lifecycle of AI development rather than confined to isolated modelling decisions.

Patterns and trends

Cross-domain literature reveals a clear evolution in how algorithmic bias is understood and addressed. Earlier studies largely treated bias as a statistical imbalance within datasets, whereas recent research adopts a sociotechnical perspective, recognizing it as a reflection of deeper institutional and structural inequalities. Mathematical analyses of fairness have further highlighted that competing fairness criteria cannot always be satisfied simultaneously, shifting the conversation from eliminating bias altogether to determining which fairness definitions should be prioritized in specific contexts. There is also a growing emphasis on transparency through explainability and documentation frameworks to strengthen accountability in AI systems. Highstakes sectors such as healthcare and criminal justice have received increased attention due to the potentially irreversible harm caused by biased decision-making, resulting in heightened regulatory scrutiny. Despite the expansion of policy frameworks, enforcement and institutional auditing mechanisms remain inconsistent and lack widespread adoption. Overall, the field has progressed from merely identifying bias to confronting broader governance and ethical integration challenges in AI deployment.

Theoretical tensions

Several unresolved theoretical tensions continue to shape the discourse on algorithmic fairness. A key challenge lies in balancing fairness with accuracy, as fairness-aware models often compromise predictive performance-particularly in high-risk applications such as medical diagnostics. Another tension emerges between equality of outcome and equality of opportunity, reflecting deeper philosophical disagreements about whether fairness should be achieved through redistributive adjustments or by preserving qualification-based thresholds. Additionally, while machine learning systems function through mathematical processes, their reliance on historically biased data challenges assumptions of technical neutrality and limits the effectiveness of purely computational solutions in addressing structural inequalities. Finally, increasing demands for transparency through explainability often conflict with corporate claims of proprietary protection, creating friction between accountability and commercial interests. Together, these tensions highlight that achieving algorithmic fairness extends beyond technical optimization and requires ethical deliberation and policy-level intervention.

Practical implications

The reviewed literature carries significant implications for AI

development and governance:

1. Lifecycle auditing is essential: Bias detection must extend

beyond model outputs to dataset sourcing, labeling practices,

and deployment contexts.

2. Interdisciplinary collaboration is required: Technical

teams alone cannot anticipate societal harms. Engagement with

ethicists, legal scholars, and affected communities improves

fairness detection [28,29].

3. Continuous monitoring over one-time fixes: Bias

mitigation should be treated as an ongoing process rather than

a pre-deployment checkpoint.

4. Regulatory enforcement must strengthen: Voluntary

compliance mechanisms are insufficient. Formalized auditing

requirements and institutional accountability are necessary.

5. Healthcare AI requires subgroup evaluation metrics:

Performance must be disaggregated across demographic

groups to prevent hidden disparities.

Practical implementation demands balancing technical feasibility, institutional responsibility, and ethical accountability.

Research gaps

Despite extensive scholarship, several critical gaps remain:

1. Limited longitudinal studies: Few studies evaluate

fairness outcomes post-deployment over extended periods.

2. Insufficient interdisciplinary evaluation frameworks:

Existing metrics emphasize statistical fairness while

underexamining lived experiences.

3. Scarcity of empirical governance assessments: Regulatory

impact is rarely measured quantitatively.

4. Underexplored bias in emerging generative AI systems:

Large language models and multimodal AI introduce new bias

dynamics not yet fully mapped.

5. Global south representation deficit: Most fairness studies

rely on Western datasets, limiting generalizability.

Addressing these gaps requires expanded methodological diversity and broader demographic inclusion.

Emerging challenges

As AI systems become more autonomous and integrated into

public infrastructure, new challenges are emerging:

1. Feedback loop amplification: Automated systems that

retrain on their own outputs risk compounding bias.

2. Cross-System interaction effects: Bias may propagate

across interconnected AI systems.

3. Regulatory fragmentation: Inconsistent international

policies create uneven accountability landscapes.

4. Explainability in deep neural networks: Increasing model

complexity complicates interpretability.

5. Economic incentive misalignment: Profit-driven

deployment may outpace fairness safeguards.

The trajectory of AI development suggests that fairness will become progressively more complex rather than simpler

Synthesis

The collective evidence indicates that algorithmic bias is not an accidental flaw but an emergent property of sociotechnical systems operating within historically unequal societies. Technical mitigation strategies provide measurable improvements but cannot independently resolve structural inequities. Theoretical trade-offs in fairness definitions highlight the normative dimensions of AI governance, while regulatory mechanisms remain in early stages of maturity.

Accordingly, responsible AI development requires an integrated approach that combines technical innovation, ethical reflection, institutional accountability, and policy enforcement. This critical synthesis moves the discussion beyond identifying bias toward understanding the systemic conditions under which it persists.

Future Directions

The preceding analysis demonstrates that algorithmic bias is a structural and lifecycle-wide phenomenon that resists purely technical correction. While significant advances have been made in fairness-aware modelling, auditing frameworks, and regulatory initiatives, emerging AI paradigms introduce new layers of complexity. This section outlines forward-looking research trajectories necessary for advancing equitable and accountable AI systems.

From static fairness to dynamic fairness

Most current fairness research evaluates models at a single deployment point. However, real-world AI systems evolve continuously through retraining, data updates, and feedback loops. Future research must move toward dynamic fairness evaluation frameworks, capable of monitoring bias longitudinally.

This includes:

a. Real-time subgroup performance auditing

b. Drift detection mechanisms for demographic disparities

c. Adaptive fairness constraints responsive to changing

population distributions

Without dynamic oversight, even initially fair models may diverge over time.

Lifecycle-integrated fairness engineering

Existing mitigation approaches frequently intervene at isolated stages-preprocessing, in-processing, or post-processing. Future systems must integrate fairness across the entire machine learning lifecycle, including:

a. Ethical dataset design (expanding dataset genealogy and

documentation standards)

b. Participatory data collection involving affected

communities

c. Bias-aware model architecture design

d. Post-deployment monitoring and institutional

accountability

Embedding fairness as a design principle rather than a corrective measure represents a critical paradigm shift.

Advancing fairness metrics beyond statistical parity

Mathematical fairness definitions such as demographic parity and equal opportunity provide necessary but insufficient tools. Future scholarship must explore:

A. Context-sensitive fairness definitions tailored to domainspecific

harms

B. Harm-based evaluation metrics grounded in lived

experiences

C. Intersectional fairness assessments that move beyond

binary demographic categories

Theoretical work should increasingly bridge computational definitions with normative ethical theory, ensuring alignment between mathematical criteria and societal values.

Governance innovation and institutional accountability

Regulatory frameworks remain fragmented and often reactive.

Future governance research should focus on:

a. Quantitative evaluation of regulatory impact

b. Standardized international auditing protocols

c. Incentive structures aligning corporate performance with

fairness outcomes

d. Executive liability and enforceable accountability

mechanisms

Institutional research must also examine how organizational culture, power dynamics, and economic incentives influence fairness outcomes.

Fairness in generative and foundation models

Emerging generative AI systems and large-scale foundation

models introduce new fairness challenges:

a) Bias amplification through large pretraining corpora

b) Cross-modal propagation of stereotypes

c) Emergent discriminatory behaviours in autonomous

systems

d) Reinforcement learning feedback loops that encode user

biases

Future research must expand fairness evaluation beyond classification tasks to include generative outputs, recommendation systems, and multimodal AI environments.

Global and cross-cultural perspectives

Current fairness research is disproportionately centered on

Western datasets and regulatory contexts. There is a pressing need

for:

a) Inclusion of Global South datasets and sociopolitical

contexts

b) Cross-cultural fairness evaluation

c) Examination of bias in multilingual and low-resource

language systems

d) Comparative analysis of international governance models

Without broader representation, fairness frameworks risk replicating epistemic inequalities at a global scale.

Human-AI collaboration models

Rather than positioning AI as a replacement for human

judgment, future research should explore hybrid models where

human oversight complements algorithmic decision-making. Key

questions include:

a) When should human override mechanisms be mandatory?

b) How can decision-support systems avoid automation

bias?

c) What training is required for human operators to interpret

AI outputs responsibly?

Understanding these dynamics is essential to prevent blind reliance on algorithmic authority.

Economic and structural incentive realignment

A persistent barrier to fairness adoption lies in economic

misalignment. Future scholarship should examine:

a) Market incentives that discourage fairness investment

b) Economic cost-benefit models of bias mitigation

c) Regulatory penalties that internalize societal harms

Fairness must become economically rational for organizations rather than purely reputational.

Toward Ethical AI as public infrastructure

As AI increasingly shapes public services-healthcare, education, finance, and governance-it must be conceptualized as public infrastructure rather than private software. Future research should investigate:

a) Public-sector auditing frameworks

b) Open-source transparency models

c) Democratic participation in AI system design

Reframing AI governance in infrastructural terms may provide stronger accountability mechanisms.

Synthesis of future trajectory

Future fairness research must be interdisciplinary, globally inclusive, lifecycle-aware, and governance-integrated. The next generation of AI ethics scholarship should prioritize structural reform alongside technical innovation. Only by aligning computational design with institutional accountability and social justice principles can AI systems evolve toward equitable and trustworthy deployment.

Conclusion

This review examined algorithmic bias through technical, ethical, historical, and regulatory perspectives, demonstrating that bias is not an isolated flaw but an emergent property of sociotechnical systems rooted in historical inequalities. Evidence across healthcare, criminal justice, finance, and employment shows that AI systems often replicate and amplify existing disparities. Fairness research further reveals inherent trade-offs between competing equity definitions, highlighting the normative nature of algorithmic decision-making. While mitigation strategies-such as preprocessing, adversarial debiasing, auditing, and regulation can reduce disparities, they are insufficient in isolation. Fairness cannot be engineered solely at the algorithmic level; it is shaped by data provenance, institutional incentives, governance structures, and broader power dynamics. As AI becomes embedded in critical infrastructures, ensuring fairness requires lifecycle integration, interdisciplinary collaboration, enforceable accountability, and global inclusivity. The central challenge is not merely improving accuracy, but aligning AI systems with principles of justice, accountability, and human dignity.

References

- Mehrabi N, Morstatter F, Saxena N, Lerman K, Galstyan A (2021) A survey on bias and fairness in machine learning. ACM Computing Surveys 54(6): 1-35.

- Buolamwini J, Gebru T (2018) Gender shades: Intersectional accuracy disparities in commercial gender classification. Proceedings of the 1st Conference on Fairness, Accountability and Transparency, PMLR, USA, 81: 77-91.

- Obermeyer Z, Powers B, Vogeli C, Mullainathan S (2019) Dissecting racial bias in an algorithm used to manage the health of populations. Science 366(6464): 447-453.

- Hardt M, Price E, Srebro N (2016) Equality of opportunity in supervised learning. Proceedings of the 30th International Conference on Neural Information Processing Systems, USA, 29: 3323-3331.

- Zhang BH, Lemoine B, Mitchell M (2018) Mitigating unwanted biases with adversarial learning. Proceedings of the 2018 AAAI/ACM Conference on AI, Ethics, and Society, USA, pp: 335-340.

- Agarwal A, Beygelzimer A, Dudík M, Langford J, Wallach H (2018) A reductions approach to fair classification. Proceedings of the 35th International Conference on Machine Learning, PMLR, USA, 80: 60-69.

- Wachter S, Mittelstadt B, Floridi L (2017) Why a right to explanation of automated decision-making does not exist in the GDPR. International Data Privacy Law 7(2): 76-99.

- Selbst AD, Boyd D, Friedler SA, Venkatasubramanian S, Vertesi J (2019) Fairness and abstraction in sociotechnical systems. Proceedings of the Conference on Fairness, Accountability, and Transparency, USA, pp: 59-68.

- Angwin J, Larson J, Mattu S, Kirchner L (2016) Machine bias. ProPublica, USA.

- Raghavan M, Barocas S, Kleinberg J, Levy K (2020) Mitigating bias in algorithmic hiring: Evaluating claims and practices. Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, USA, pp: 469-481.

- Bender EM, Gebru T, McMillan-Major A, Shmitchell S (2021) On the dangers of stochastic parrots. Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, USA, pp: 610-623.

- Abid A, Farooqi M, Zou J (2021) Persistent anti-Muslim bias in large language models. Proceedings of the 2021 AAAI/ACM Conference on AI, Ethics, and Society, USA, pp: 298-306.

- Seyyed-Kalantari L, Zhang H, McDermott M, Chen IY, Ghassemi M (2021) Underdiagnosis bias of artificial intelligence algorithms applied to chest radiographs in under-served patient populations. Nature Medicine 27(12): 2176-2182.

- Bartlett R, Morse A, Stanton R, Wallace N (2022) Consumer-lending discrimination in the FinTech era. Journal of Political Economy 130(2): 273-305.

- Cath C, Wachter S, Mittelstadt B, Taddeo M, Floridi L (2018) Artificial intelligence and the 'good society'. Philosophy & Technology 31(1): 157-162.

- Bolukbasi T, Chang KW, Zou J, Saligrama V, Kalai A (2016) Man is to computer programmer as woman is to homemaker? Debiasing word embeddings. Proceedings of the 30th International Conference on Neural Information Processing Systems, USA, pp: 4356-4364.

- Caliskan A, Bryson JJ, Narayanan A (2017) Semantics derived automatically from language corpora contain human-like biases. Science 356(6334): 183-186.

- Chouldechova A (2017) Fair prediction with disparate impact: A study of bias in recidivism prediction instruments. Big Data 5(2): 153-163.

- Crawford K, Paglen T (2019) Excavating AI. AI Now Institute.

- Dastin J (2018) Amazon scraps secret AI recruiting tool that showed bias against women. Reuters, UK.

- Jain R, Varshney N, Rathod K, Nagar H, Suthar R, et al. (2025) Sentiment analysis-driven stock price forecasting using Natural Language Processing (NLP) and predictive analytics. 2025 Artificial Intelligence and Smart Technologies for Sustainability Conference (AISTS), Rajkot, India, pp: 1-7.

- Diakopoulos N (2015) Algorithmic accountability. Digital Journalism 3(3): 398-415.

- Dressel J, Farid H (2018) The accuracy, fairness, and limits of predicting recidivism. Science Advances 4(1): eaao5580.

- Jain R (2023) The impact of artificial intelligence on business: Opportunities and challenges. SSRN Electronic Journal, pp: 1-4.

- Friedler SA, Scheidegger C, Venkatasubramanian S (2016) On the (im)possibility of fairness. ArXiv.

- Gebru T, Morgenstern J, Vecchione B, Vaughan JW, Wallach H, et al. (2021) Datasheets for datasets. Communications of the ACM 64(12): 86-92.

- Green B, Hu L (2018) The myth in the methodology. Workshop on Fairness, Accountability, and Transparency in Machine Learning.

- Hanna A, Denton E, Smart A, Smith-Loud J (2020) Towards a critical race methodology in algorithmic fairness. Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, USA, pp: 501-512.

- Holstein K, Wortman Vaughan J, Daumé IIIH, Dudík M, Wallach H (2019) Improving fairness in machine learning systems. Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems, USA, 600: 1-16.

- Hooker S (2021) Moving beyond "algorithmic bias is a data problem". Patterns 2(4): 100241.

- Kamiran F, Calders T (2012) Data preprocessing techniques for classification without discrimination. Knowledge and Information Systems 33(1): 1-33.

- Kleinberg J, Lakkaraju H, Leskovec J, Ludwig J, Mullainathan S (2018) Human decisions and machine predictions. Quarterly Journal of Economics 133(1): 237-293.

- Koenecke A, Nam A, Lake E, Nudell J, Quartey M, et al. (2020) Racial disparities in automated speech recognition. Proceedings of the National Academy of Sciences 117(14): 7684-7689.

- Lum K, Isaac W (2016) To predict and serve? Significance 13(5): 14-19.

- Mitchell M, Wu S, Zaldivar A, Barnes P, Vasserman L, et al. (2019) Model cards for model reporting. Proceedings of the Conference on Fairness, Accountability, and Transparency, USA, pp: 220-229.

- Jain R (2025) Cutting-edge developments in science, engineering and technology: A multidisciplinary review. International Journal of Current Research in Science, Engineering & Technology 8(1): 219-225.

- Raji ID, Buolamwini J (2019) Actionable auditing: Investigating the impact of publicly naming biased performance results of commercial AI products. Proceedings of the 2019 AAAI/ACM Conference on AI, Ethics, and Society, pp: 429-435.

- Selbst AD (2021) An institutional view of algorithmic impact assessments. Harvard Journal of Law & Technology 35(1): 75-124.

- Suresh H, Guttag JV (2021) A framework for understanding sources of harm throughout the machine learning life cycle. Proceedings of the 1st ACM Conference on Equity and Access in Algorithms, Mechanisms, and Optimization, USA, 17: 1-9.

- Veale M, Binns R (2017) Fairer machine learning in the real world: Mitigating discrimination without collecting sensitive data. Big Data & Society 4(2): 205395171774353.

- Zafar MB, Valera I, Gomez Rodriguez M, Gummadi KP (2017) Fairness beyond disparate treatment & disparate impact: learning classification without disparate mistreatment. Proceedings of the 26th International Conference on World Wide Web, Geneva, Switzerland, pp: 1171-1180.

- Jain R, Varshney N, Durgarao MSP, Maurya SK, Mehta DK, et al. (2026) Deep learning-based volatility forecasting, portfolio management, and reinforcement learning-based risk optimisation. National Academy Science Letters.

- Koshti Y, Jain R, Barhaiya H, Singh RP, Gour N (2026) A Comprehensive study on stock market forecasting using AI and ML techniques. In: Kumar A, Mozar S (Eds.), Proceedings of the 7th International Conference on Communications and Cyber Physical Engineering. ICCCE 2024. Lecture Notes in Electrical Engineering, Springer, Singapore, 1466: 450-455.

© 2026 Rahul Jain. This is an open access article distributed under the terms of the Creative Commons Attribution License , which permits unrestricted use, distribution, and build upon your work non-commercially.

a Creative Commons Attribution 4.0 International License. Based on a work at www.crimsonpublishers.com.

Best viewed in

a Creative Commons Attribution 4.0 International License. Based on a work at www.crimsonpublishers.com.

Best viewed in

.jpg)

Editorial Board Registrations

Editorial Board Registrations Submit your Article

Submit your Article Refer a Friend

Refer a Friend Advertise With Us

Advertise With Us

.jpg)

.jpg)

.bmp)

.jpg)

.png)

.jpg)

.jpg)

.png)

.png)

.png)