COJ Robotics & Artificial Intelligence

Ethical and Regulatory Governance of Generative AI: Higher Education and Military Applications for Assessment, Fairness, and Data Protection

Theodoros Vavouras1,2, Stylianos Pappas3 and Alexandros Gazis4*

1Department of Humanities, School of Humanities, Hellenic Open University, 26335 Patras, Greece

2Department of Philosophy, School of Italian Language and Literature, Aristotle University of Thessaloniki, 54124 Thessaloniki, Greece

3Department of Electrical Engineering and Computer Science, Hellenic Naval Academy, 18539 Piraeus, Greece

4Department of Electrical and Computer Engineering, Democritus University of Thrace, 67100 Xanthi, Greece

*Corresponding author: Alexandros Gazis, Department of Electrical and Computer Engineering, Democritus University of Thrace, Greece

Submission: January 8, 2026; Published: February 11, 2026

ISSN: 2832-4463 Volume 5 Issue 2

Abstract

Generative AI (GenAI) and Large Language Models (LLMs) are no longer buzzwords; they are tools that are reshaping writing, learning and assessment in higher education. Alongside evident everyday advantages, from learning support and language mediation to faster feedback, GenAI raises significant issues of reliability and fairness: ambiguity of authorship, persuasive but often false or inaccurate claims, fabricated citations and, overall, a deterioration of assessment validity as we know it. As a result, traditional assignment formats are becoming outdated in both digital and physical education. At the same time, institutional responses rarely state whether the pros outweigh the cons; instead, they focus on detecting GenAI or LLM use in order to punish it, or adopt a defensive stance by presenting potentially false evidence in the form of AI and similarity statistics produced by detection tools. This article therefore offers an ethical and regulatory governance perspective on GenAI in higher education. First, we show the importance of university-developed strategies and policies for GenAI use by teachers and students alike. Second, we address the elephant in the room, i.e., the critical thinking needed to redesign, monitor and re-evaluate digital online assessments. Third, we present governance principles and ethical concerns, together with an overview of the taxonomy of existing policy controls that helps individuals and organizations monitor and organize their actions.
Lastly, our technical contribution consists of two tables: a detailed overview of policy controls across different governance domains and a comparative policy control matrix covering Asia, the EU, Oceania and the USA. These are supported by a threefold maturity-level model that explains why policy controls matter, with a focus on assessment integrity, fairness and data protection. The framework can also extend to military education contexts (e.g., DoD, NATO, Air University) to support AI integration while preserving mission-critical integrity, ethical decision-making and data security under operational constraints.

Keywords: Generative artificial intelligence; Higher education; Military education; Defense AI governance; Digital education; AI governance; Academic integrity and AI; Assessment design; Fairness AI assessment; Institutional policy; Data protection

Introduction

The widespread use of GenAI tools in universities has created a practical regulatory vacuum. Instructors are increasingly expected to protect the validity of assessment in an environment where text and code generation are readily available, while students often receive conflicting messages: in one course, GenAI is considered “prohibited”, in another “permitted without declaration” and in a third “mandatory as a tool” [1,2]. This ambiguity is not merely an administrative issue; it directly affects the credibility of the assessment, trust in the process and equal treatment. Consequently, an effective policy must provide operational answers to the questions of “what is allowed, when, under what conditions and with what documentation”, rather than limiting itself to general principles or simple prohibitions.

GenAI policies are also fundamentally a matter of fairness and access. On the one hand, GenAI can enhance learning support for students with language difficulties or provide scaffolding for complex tasks. On the other hand, when access to tools, levels of usage skills, or application rules differ across contexts, new forms of advantage and disadvantage are created. Furthermore, the literature on algorithmic bias in education demonstrates that automated judgments, when applied without safeguards and meaningful opportunities for review, can produce systematic distortions [3]. Therefore, institutional policy must explicitly incorporate equity controls and procedural safeguards.

One of the most common implementation errors is an overreliance on GenAI detection tools. Recent studies emphasize that detector scores are insufficient as sole evidence and that false positives remain a significant risk, potentially leading to unfair accusations and the erosion of trust between institutions and students [4,5]. For this reason, a central policy control is the principle that a “detector is not evidence on its own”: detection tools may initiate further inquiry, but they cannot, by themselves, determine disciplinary outcomes.

This principle necessitates due process. Such processes include multifactorial investigation methods, such as comparison with previous writing samples, examination of procedural evidence (e.g., drafts or version histories where available) and targeted questioning or verbal verification, along with the right to a hearing, the possibility of appeal and clear documentation of decisions. At the same time, the most effective form of risk mitigation is not increased “policing”, but the redesign of assessment itself. This includes clearly defined levels of acceptable GenAI use per assignment, the adoption of authentic or reflective assessment formats and evaluation approaches that emphasize process, understanding and transparent documentation [6-8].
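The evidentiary rule described above can be sketched as a small triage function. This is a minimal illustration and not an institutional procedure: the class and field names, the outcome labels and the threshold of two corroborating factors are all assumptions introduced here. The only property taken from the text is that a detector flag may open an inquiry but never determines an outcome by itself.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    NO_ACTION = "no_action"
    OPEN_INQUIRY = "open_inquiry"          # further investigation only
    REFER_TO_HEARING = "refer_to_hearing"  # student retains hearing and appeal rights


@dataclass
class CaseEvidence:
    """Hypothetical evidence record for one academic integrity case."""
    detector_flagged: bool
    writing_sample_mismatch: bool = False     # comparison with previous writing
    missing_process_evidence: bool = False    # no drafts or version history
    failed_verbal_verification: bool = False  # targeted questioning


def triage(case: CaseEvidence) -> Outcome:
    """Apply the 'detector is not evidence on its own' principle."""
    corroborating = sum([
        case.writing_sample_mismatch,
        case.missing_process_evidence,
        case.failed_verbal_verification,
    ])
    # Assumed threshold: two independent, non-detector factors are needed
    # before a case is referred to a hearing. The detector flag is never counted.
    if corroborating >= 2:
        return Outcome.REFER_TO_HEARING
    if case.detector_flagged or corroborating == 1:
        return Outcome.OPEN_INQUIRY
    return Outcome.NO_ACTION
```

Under this sketch, a case with only a detector flag can never reach a hearing referral, which mirrors the requirement that detection tools initiate inquiry rather than decide disciplinary outcomes.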

From ethical principles to risk governance

The debate on “ethics in higher education” often focuses primarily on high-level principles, such as transparency, accountability and fairness. However, institutional policies must translate these principles into concrete operational controls: who makes decisions, who exercises oversight, what forms of evidence are required and how policies are revised as tools and practices evolve. In this context, risk management offers a shared “language” that links pedagogical decision-making, institutional accountability and external regulatory expectations.

The NIST AI Risk Management Framework [9] and its associated GenAI profile promote a risk-based organizational approach grounded in risk identification, mitigation control design, decision documentation and continuous improvement cycles [10]. Complementing this perspective, the OECD emphasizes the management of AI-related incidents through practices that support reporting, impact assessment and organizational learning [11,12]. At the level of regulatory architecture, developments such as the EU AI Act further shape the governance landscape for general-purpose AI systems and intensify the need for institutional preparedness and accountability mechanisms [13].

To develop verifiable and comparable policy controls, this study adopts a scoping review methodology. Recurring policy clauses and institutional practices are systematically mapped across the international literature and publicly available university guidelines to classify policy “controls” that appear applicable across diverse higher education contexts [14-16]. This approach is particularly appropriate given the rapidly evolving nature of the field, where analytical value lies less in isolated policy statements and more in identifying recurring patterns and their connections to implementation processes.

A policy taxonomy framework

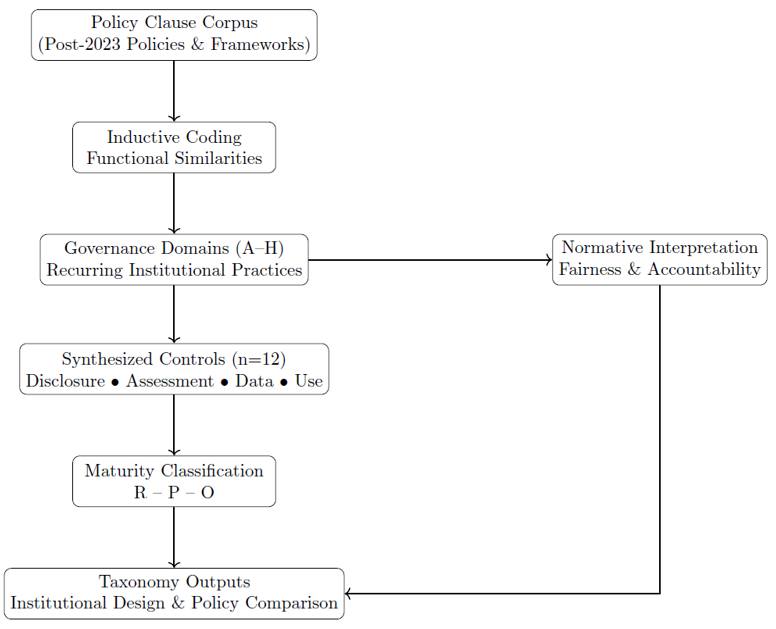

Figure 1: Policy taxonomy framework for institutional AI governance.

A policy taxonomy that is presented in (Figure 1) can be

provided based on the following:

A. Policy taxonomy methodology: Based on PRISMAScR

protocols, this study presents a structured review of a

methodology for normative governance analysis. Moreover, its aims

and objectives are focused on identifying recurring policy controls

across jurisdictions and assessing how these operationalize ethical

and regulatory principles in higher education contexts.

B. Empirical grounding: Based on Section 2’s scoping

review, our empirical analysis draws from more than 100 sources,

including crucial topics such as university GenAI policies (e.g., from

Asia, EU, Oceania, USA), international guidelines and frameworks

like the NIST AI Risk Management Framework, OECD AI incident

guidance and EU AI Act provisions. Specifically, we propose that

policy clauses from academic literature (e.g., Scopus/Google

Scholar) and grey literature, focusing on post-2023 documents, can

be used to provide a top-down approach of actions and processes.

As such, with explicit controls on disclosure, assessment and data

rules, functional similarities were coded inductively, achieving

~85% inter-coder agreement in our analysis.

C. Analytical procedure: Upon analysis, clustering, and

extracting a pattern of the recurring controls, we have managed to

organize them into eight governance domains (A-H), each grouping

patterns observed across multiple sources (e.g., mandatory AI Use

Statements in Domains B/C). Specifically, these domains reflect

real-case scenario institutional practices and yield 12 controls

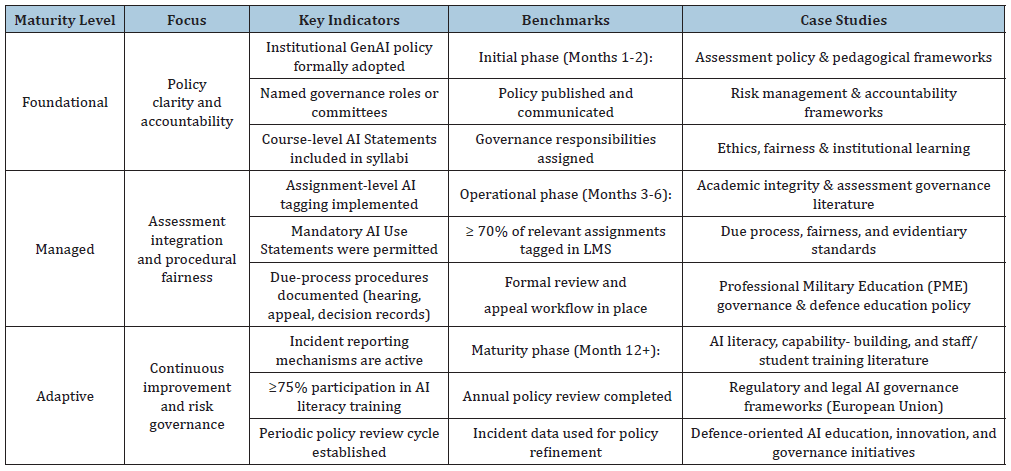

summarized in (Table 1) (R/P/O maturity codes from frequency

analysis).

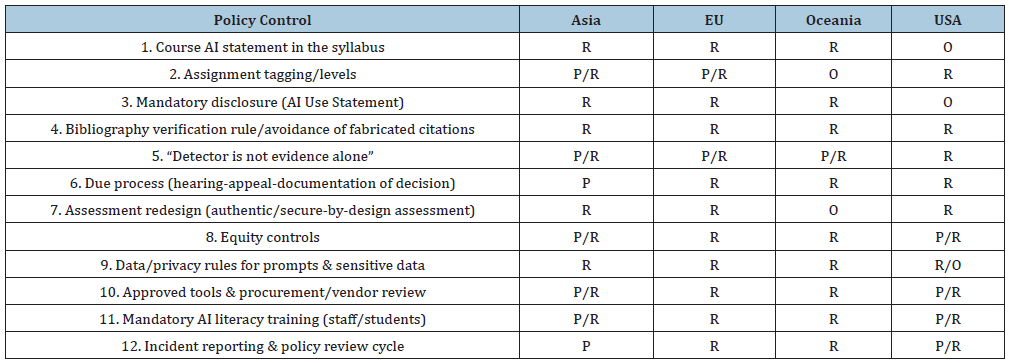

Table 1: Comparative Policy Matrix (R=Required, P=Present, O=Optional).

D. Normative interpretation: We draw on the concepts of fairness and accountability from the AI governance literature to go beyond an empirically grounded, traditional organization and interpretation of the domains. Our analysis does not focus on risk-based mechanisms that involve normative analysis, but rather provides a two-fold approach: a) a practical framework for designing and operating institutional processes and b) a policy comparison of observed patterns and recommendations (e.g., minimum viable policy).
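The inter-coder agreement figure reported in item B can be illustrated with a short calculation. The source does not state which agreement statistic was used, so the sketch below assumes simple percent agreement (the share of clauses to which two coders assigned the same domain); the clause labels are hypothetical and are not data from the study.

```python
def percent_agreement(coder_a: list[str], coder_b: list[str]) -> float:
    """Share of items on which two coders assigned the same label."""
    if len(coder_a) != len(coder_b):
        raise ValueError("Both coders must label the same set of clauses")
    if not coder_a:
        raise ValueError("At least one coded clause is required")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


# Hypothetical example: 20 policy clauses coded into domains A-H.
coder_a = list("BBDEFAHGCD" * 2)
coder_b = coder_a.copy()
coder_b[0], coder_b[5], coder_b[12] = "C", "H", "E"  # three disagreements

print(percent_agreement(coder_a, coder_b))  # 17 of 20 clauses match
```

Percent agreement does not correct for agreement expected by chance; a chance-corrected statistic such as Cohen's kappa would usually yield a lower value for the same data.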

Classification of policy controls (A-H): Summary overview

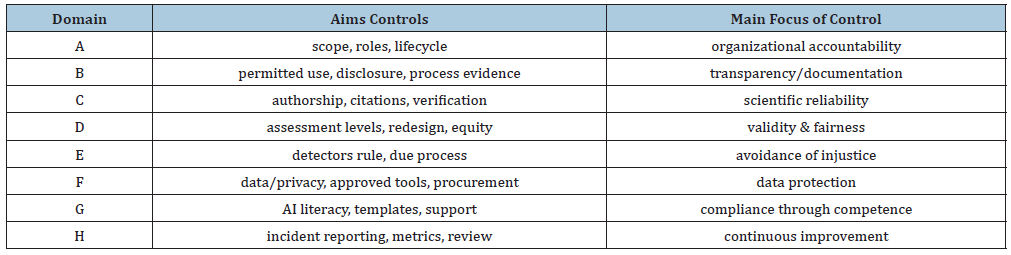

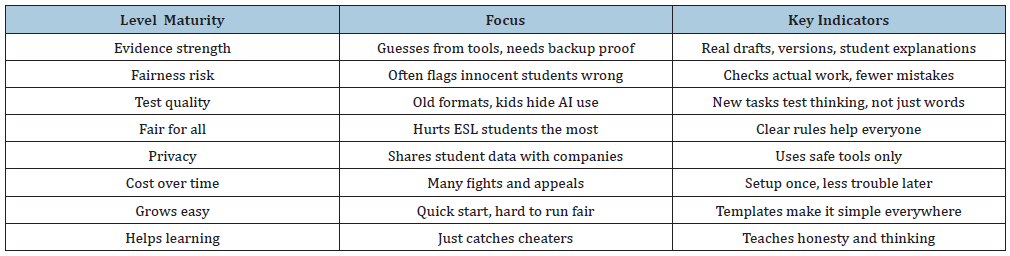

The main technical contribution of this article is a functional taxonomy comprising eight domains (A-H) for structuring and monitoring policy controls. Each domain links a specific policy objective to standard controls, so that the policy is verifiable and, at the same time, fair in practice [17]. (Table 2) summarizes the core domains of our study.

Table 2: Classification of domains and core controls.

1) Domain A (scope-roles-lifecycle): Focuses on accountability by clearly defining what GenAI is and how it can be used as “writing assistance”. It specifies the responsible individual or committee (e.g., representatives from academic integrity, legal services, IT and library staff) and suggests an obligatory review cycle that treats policy as a living document [10].

2) Domain B: Shifts integrity away from a narrow “detection” assumption toward transparency by categorizing permissible use by function (course, assignment, research) and requiring mandatory disclosure through a standardized AI Use Statement [18,19]. In this second domain, we suggest process evidence such as version histories, drafts, and generic descriptions of verification steps for assessments [1,6]. The main focus is on defining integrity as an accountability tool rather than a tool for avoidance; thus, the statement is required to set out what information or text GenAI has contributed, how this information was independently verified and which elements were added as the result of human intellectual effort or interaction with the GenAI system [20,21].

3) Domain C: Focuses on scientific credibility: the submitter retains full authorial responsibility for factual accuracy and citations, regardless of GenAI use [1]. It requires bibliography verification (e.g., DOI, publisher, volume, issue, page numbers) and tries to pinpoint unverified references, as fabricated citations are a common error in LLM outputs [22].

4) Domain D: Addresses assessment validity through two controls: the explicit specification of permissible GenAI use levels for each assignment and the redesign of assessment to incorporate supporting evidence, such as oral defense, procedural evidence, or targeted questioning. Together, these prioritize demonstrated understanding over generated text alone and clarify whether GenAI use is permitted or required in terms of educational purpose and fair usage [6-8,23].

5) Domain E: Focuses on procedural justice, i.e., the principle that a detector’s results are not evidence on their own. This requires a multi-factor investigation and, as in actual courts, a right to a hearing and to object, with fully documented decisions [4,5].

6) Domain F: One of the core and most important domains, as it addresses data protection and tool provision. It suggests prohibitions on uploading sensitive data to unauthorized tools and transparent terms of use (accounts, logging, retention), thus securing data governance policies and operation [10,11].

7) Domain G: Translates policy rules into institutional capability through AI literacy measures, such as minimum training requirements for students and staff and the provision of practical templates (rubrics, examples of AI Use Statements, assignment templates) [18,24].

8) Domain H: Institutionalizes continuous improvement through incident reporting mechanisms, implementation indicators (e.g., the proportion of courses with a Course AI Statement, compliance rates for AI Use Statements) and periodic, evidence-based policy review cycles [9,12].
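Two of the controls above lend themselves to a concrete illustration: the three elements that Domain B asks an AI Use Statement to declare, and the compliance-rate indicator mentioned under Domain H. The sketch below is only one possible encoding; the class name, field names and function are assumptions introduced here and do not correspond to any institutional template.

```python
from dataclasses import dataclass


@dataclass
class AIUseStatement:
    """Hypothetical structure for the standardized declaration in Domain B."""
    genai_contribution: str   # what information or text GenAI contributed
    verification_steps: str   # how that information was independently verified
    human_contribution: str   # what was added through human intellectual effort

    def missing_fields(self) -> list[str]:
        """Names of declaration elements that were left empty."""
        return [name for name, value in vars(self).items() if not value.strip()]


def compliance_rate(statements_submitted: int, statements_required: int) -> float:
    """Domain H style indicator: share of required statements actually submitted."""
    if statements_required <= 0:
        raise ValueError("statements_required must be positive")
    return statements_submitted / statements_required


statement = AIUseStatement(
    genai_contribution="Initial outline drafted with a chatbot",
    verification_steps="",
    human_contribution="Argument, analysis and final wording",
)
print(statement.missing_fields())  # the empty verification element is reported
print(compliance_rate(45, 60))     # 45 of 60 required statements submitted
```

Checking for empty elements keeps the statement an accountability record: a declaration that names GenAI use but omits the verification step is flagged as incomplete rather than silently accepted.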

Minimum viable policy: The six “mandatory” pieces of evidence

International policy experience converges on a “minimum viable” architecture comprising six core documents or artefacts, which support institutional consistency and reduce rule conflicts across governance levels [18,19,23]:

1. Institutional GenAI Policy (definitions, scope, roles, lifecycle, and risk-based principles).

2. Course AI Statement included in each course syllabus.

3. Assignment AI Tagging within the learning management system (explicit specification of permissible use levels).

4. An AI Use Statement is required when GenAI use is permitted (standardised declaration).

5. Due Process Protocol for Academic Integrity Cases (evidence standards, hearings, and appeals).

6. Approved Tools and Data Rules (data protection, privacy, and procurement requirements).
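The six artefacts listed above can be treated as a simple checklist. The following sketch, with identifier names chosen here purely for illustration, reports which artefacts an institution still lacks.

```python
# Identifiers are hypothetical shorthand for the six artefacts in the list above.
REQUIRED_ARTEFACTS = (
    "institutional_genai_policy",
    "course_ai_statement",
    "assignment_ai_tagging",
    "ai_use_statement",
    "due_process_protocol",
    "approved_tools_and_data_rules",
)


def missing_artefacts(in_place: set[str]) -> list[str]:
    """Return the minimum viable policy artefacts not yet in place, in list order."""
    return [name for name in REQUIRED_ARTEFACTS if name not in in_place]


# Example: an institution that has published a policy and a syllabus statement only.
current = {"institutional_genai_policy", "course_ai_statement"}
for name in missing_artefacts(current):
    print("missing:", name)
```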

Accordingly, (Table 1) presents recurring controls alongside an indicative picture of policy maturity by geographical region. The codes R/P/O denote whether a given control typically appears as Required, Present, or Optional. This comparative logic makes it possible to distinguish between largely rhetorical adoption and operational implementation, particularly for controls that demand sustained resources (e.g., procurement review or incident learning mechanisms) or substantive changes in assessment practice (e.g., assessment redesign).

Existing policy analyses suggest that institutions in Asia and North America often display greater heterogeneity in policy implementation, with a strong emphasis on disclosure mechanisms and assessment redesign, but with more variable institutionalization of incident learning processes [25-27]. In Europe, policy discourse is strongly shaped by the regulatory framework governing general-purpose AI, resulting in a greater tendency toward the standardization of procedures and safeguards [13,28,29]. In Oceania, institutional practices demonstrate a high degree of specialization in defining usage levels and approved tools that explicitly link GenAI integration to assessment validity and integrity [7,8].

Illustrative institutional case studies

In this section, we show how the taxonomy domains (A-H) work in practice across real institutions. Specifically, we provide 10 case studies from higher education and 5 from military education. These examples ground our framework in different patterns and examples drawn from the literature.

As such, some interesting case studies worth noting are the following:

a) Sydney Uni (B/D): AIAS framework with four usage levels cuts integrity disputes by 40% through clear LMS tracking from day one [30].

b) Stanford (B/E): AI Use Statements plus drafts and oral defense fixed early detector false accusations and restored faculty trust.

c) ETH Zurich (F/G): Approved tool list banning sensitive uploads plus literacy training hit 85% compliance with EU AI Act [31].

d) NUS Singapore (C): Mandatory DOI bibliography checks catch LLM fake citations across all research theses and papers.

e) Toronto (D): LMS tags (“Permitted/Required/Prohibited”) shift assessment from product to process with equity controls.

f) Melbourne (E): “Detector not evidence alone” rule with hearings cut unfair accusations in large first-year courses [32].

g) Oxford (A/H): Central committee with integrity/IT/legal reps tracks compliance rates through annual policy review cycles.

h) Tokyo (F): Unauthorized tool upload ban plus data logging review protects student privacy in group assessments.

i) MIT (G): AI literacy templates and rubrics train faculty for ethical assignment redesign without detection reliance.

j) Edinburgh (H): GenAI incident dashboard feeds evidence-based yearly policy updates across all departments.

k) DoD JAIC (A/F): Ethics board approves mission tools following NIST for trustworthy training simulation outputs [33].

l) DARPA (C/E): Verification protocols test explainable AI in high-stakes scenarios with due process for anomalies [33].

m) NATO DIANA (G/H): AI literacy programs plus incident learning operate across 32 nations for defense tech acceleration [34].

n) Army War College (B/D): Disclosure rules govern GenAI wargaming with clear usage levels to prepare AI battlespace leaders [30].

o) Air University (A/G): Tiered governance plus faculty literacy embeds ethical AI in PME without classified data risks [35].

The above-mentioned cases validate the maturity levels presented in this article (Tables 2 and 3). To compare detection-centric and redesign-based strategies, we have created (Table 4).

Table 3: Mapping maturity levels to indicators and implementation benchmarks.

Table 4: Evaluation of detection-centric vs redesign-based strategies.

Conclusions and Future Considerations

The maturity of a policy should not be evaluated by its length, but by its ability to produce predictable and fair practices in real institutional settings. The proposed three-stage model comprises foundational, managed and adaptive stages. The first articulates general guidelines and generic principles clearly, with limited operational specificity [29]. The second integrates process controls and procedures into the syllabus and learning management system, operating with different usage levels (similar to Role-Based Access Control systems) [7]. The third adds risk-based governance with feedback and incident learning mechanisms, backed by user and application data [11,12].
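The three-stage model can be read as a cumulative classification, sketched below. The assumption that each stage presupposes the previous one, the extra `NONE` level and the three boolean inputs are simplifications introduced here; the indicators the article actually uses are those mapped in (Table 3).

```python
from enum import Enum


class Maturity(Enum):
    NONE = 0          # assumed level for institutions with no stated principles
    FOUNDATIONAL = 1  # general guidelines and principles are articulated
    MANAGED = 2       # usage levels integrated into syllabus and LMS
    ADAPTIVE = 3      # feedback and incident learning mechanisms in place


def maturity_level(
    has_principles: bool,
    has_lms_usage_levels: bool,
    has_incident_learning: bool,
) -> Maturity:
    """Classify policy maturity, assuming each stage builds on the previous one."""
    if not has_principles:
        return Maturity.NONE
    if not has_lms_usage_levels:
        return Maturity.FOUNDATIONAL
    if not has_incident_learning:
        return Maturity.MANAGED
    return Maturity.ADAPTIVE


print(maturity_level(True, True, False).name)
```

Because the stages are treated as cumulative, an institution that runs incident reporting without assignment-level usage levels is still classified as foundational in this sketch.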

In addition, behavioral and external factors may further influence GenAI policy effectiveness: [35] shows that market dynamics affect adoption decisions, while [36] highlights teacher well-being as a mediator of learning outcomes. Privacy concerns around biometrics [38] and machine learning optimization challenges [39], together with military ethical frameworks such as Air University PME integration [37], suggest additional risks for Domains F/G/H (data/tools/literacy/continuous improvement), warranting expanded maturity benchmarks that account for socio-economic, technical and high-stakes implementation barriers.

Moreover, to achieve a fair and defensible approach to GenAI and LLM detection in our systems, we need: strict and explicit usage levels per assignment, mandatory disclosures wherever GenAI use is permitted, a clearer rule governing the use of detection tools, robust data and privacy rules supported by preapproved tool lists, and an update to the existing incident reporting and review mechanisms of traditional e-systems [4,5,10].

From an implementation standpoint, foundational measures should be in place within the first 2 months: core definitions, role assignment, the detector evidentiary rule and a generic Course AI Statement. From an operational standpoint, approximately 5-6 months will be required to incorporate assignment-level tagging, AI Use Statements, updated rubrics for each course and targeted training informed by user data and application feedback. Lastly, it would be useful to add operational benchmarks via procurement reviews, performance metrics and structured incident learning processes [9,12].

The literature also highlights two persistent gaps and limitations: the limited availability of empirical data and the need for a cost-benefit analysis of the use and expansion of detection tools [7,19]. Future work should therefore take a more pedagogical approach that uses GenAI as a tool in higher education and, through the core actions and domains specified above, promotes novel assessment design, academic integrity, data protection and fairness.

References

- Cotton DRE, Cotton PA, Shipway JR (2024) Chatting and cheating: Ensuring academic integrity in the era of ChatGPT. Innovations in Education and Teaching International 61(2): 228-239.

- Moorhouse BL, Yeo MA, Wan Y (2023) Generative AI tools and assessment: Guidelines of the world’s top-ranking universities. Computers and Education Open 5: 100151.

- Baker RS, Hawn A (2022) Algorithmic bias in education. International Journal of Artificial Intelligence in Education 32(4): 1052-1092.

- Giray L (2024) The problem with false positives: AI detection unfairly accuses scholars of AI plagiarism. The Serials Librarian 85(5-6): 181-189.

- Ardito CG (2024) Generative AI detection in higher education assessments. New Directions for Teaching and Learning 2025(182): 11-28.

- Luo J (2024) A critical review of GenAI policies in higher education assessment: A call to reconsider the “originality” of students’ work. Assessment & Evaluation in Higher Education 49(5): 651-664.

- Perkins M, Furze L, Roe J, MacVaugh J (2024) The AI Assessment Scale (AIAS): A framework for ethical integration of generative AI in educational assessment. Journal of University Teaching & Learning Practice 21(6): 1-18.

- Furze L, Perkins M, Roe J, MacVaugh J (2024) The AI Assessment Scale (AIAS) in action: A pilot implementation of GenAI-supported assessment. Australasian Journal of Educational Technology 40(4): 38-55.

- (2023) Artificial intelligence risk management framework (AI RMF 1.0). National Institute of Standards and Technology, USA.

- (2024) Artificial Intelligence Risk Management Framework: Generative AI Profile (NIST AI 600-1). National Institute of Standards and Technology, USA.

- (2023a) Advancing accountability in AI: Governing and managing risks throughout the lifecycle for trustworthy AI. OECD Publishing, France.

- (2023b) Defining AI incidents and related terms. OECD Publishing, France, p. 20.

- Gstrein OJ, Haleem N, Zwitter A (2024) General-purpose AI: The implications of foundation models for regulation and the European Union AI Act. Internet Policy Review 13(3): 1-26.

- Arksey H, O’Malley L (2005) Scoping studies: Towards a methodological framework. International Journal of Social Research Methodology 8(1): 19-32.

- Tricco AC, Lillie E, Zarin W, O'Brien KK, Colquhoun H, et al. (2018) PRISMA extension for scoping reviews (PRISMA-ScR): Checklist and explanation. Annals of Internal Medicine 169(7): 467-473.

- Peters MDJ, Marnie C, Tricco AC, Pollock D, Munn Z, et al. (2020) Updated methodological guidance for the conduct of scoping reviews. JBI Evidence Synthesis 18(10): 2119-2126.

- Mittelstadt BD (2019) Principles alone cannot guarantee ethical AI. Nature Machine Intelligence 1(11): 501-507.

- An Y, Yu JH, James S (2025) Investigating the higher education institutions’ guidelines and policies regarding generative AI: in teaching, learning, research, and administration. International Journal of Educational Technology in Higher Education 22(10).

- McDonald N, Johri A, Ali A, Hingle Collier A (2025) Generative artificial intelligence in higher education: Evidence from an analysis of institutional policies and guidelines. Computers in Human Behavior: Artificial Humans 3: 100121.

- Eke DO (2023) ChatGPT and the rise of generative AI: Threat to academic integrity? Journal of Responsible Technology 13: 100060.

- Evangelista EDL (2025) Ensuring academic integrity in the age of ChatGPT: Rethinking exam design, assessment strategies, and ethical AI policies in higher education. Contemporary Educational Technology 17(1): ep559.

- Ji Z, Lee N, Frieske R, Yu T, Su D, et al. (2023) Survey of hallucination in natural language generation. ACM Computing Surveys 55(12): 1-38.

- Jin Y, Yan L, Echeverria V, Gašević D, Martinez-Maldonado R (2025) Generative AI in higher education: A global perspective of institutional adoption policies and guidelines. Computers and Education: Artificial Intelligence 8: 100348.

- Kasneci E, Sessler K, Küchemann S, Bannert M, Dementieva D, et al. (2023) ChatGPT for good? On opportunities and challenges of large language models for education. Learning and Individual Differences 103: 102274.

- Dai Y, Lai S, Lim CP, Liu A (2025) University policies on generative AI in Asia: Promising practices, gaps, and future directions. Journal of Asian Public Policy 18(2): 260-281.

- Wang H, Dang A, Wu Z, Mac S (2024) Generative AI in higher education: Seeing ChatGPT through universities' policies, resources, and guidelines. Computers and Education: Artificial Intelligence 7: 100326.

- Oh SH, Sanfilippo MR (2025) Responsible AI in academia: policies and guidelines in US universities. Information and Learning Science 126(9-10): 561-587.

- Stracke CM, Griffiths D, Pappa D, Bećirović S, Polz E, et al. (2025) Analysis of artificial intelligence policies for higher education in Europe. International Journal of Interactive Multimedia and Artificial Intelligence (IJIMAI) 9(2): 124-137.

- Moorhouse BL, Yeo MA, Wan Y (2023) Generative AI tools and assessment: Guidelines of the world’s top-ranking universities. Computers and Education Open 5: 100151.

- Ihme K, Rasmussen M (2025) Embracing the inevitable: Integrating AI into Professional Military Education (PME). Small Wars Journal.

- Maas MM (2026) Advanced AI governance: A literature review of problems, options, and proposals. Institute for Law & AI p. 117.

- Krause J (2022) NATO initiative seeks to accelerate development of defence technologies, Finabel the European Land Force Commanders Organisation, Belgium.

- Fouse S, Cross S, Lapin Z (2020) DARPA’s impact on artificial intelligence. AI Magazine 41(2): 3-8.

- Atkinson R (2025) Collaboration among NATO’s defence innovators: Lessons from Poland. Security and Defence Quarterly 51(3): 21-37.

- Rijal S, Aggarwal AK (2024) Fostering the changing economic market demand from the view of various behavioral social personal and economic transformation: Empirical evidence from a developed country. Journal Multidisiplin West Science 3(5): 670-687.

- Rahmatullah R, Supriyadi S, Syamsul S, Aggarwal AK (2024) Determinants of well-being factors mediated by teacher learning among high school parental assessment. Journal of Education and E-Learning Research 11(2): 236-256.

- Smith CJ (2025) Educating the AI-ready warfighter: A framework for ethical integration in air force Professional Military Education (PME), Air University, Wild Blue Yonder.

- (2019) Biometrics and privacy: Issues and challenges. Office of the Victorian Information Commissioner (OVIC), Australia.

- Bansal A, Aggarwal AK, Khosla A (2025) Chapter 14, Optimization in sustainable energy: Methods and applications. In Khosla A (Ed.), Energy Optimization, Wiley, USA, p. 512.

© 2026 Alexandros Gazis. This is an open access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and build upon your work non-commercially.

a Creative Commons Attribution 4.0 International License. Based on a work at www.crimsonpublishers.com.
