Evolutions in Mechanical Engineering

Cognitive Automation and its Impact on Additive Manufacturing

Albert Jones1*, Zhuo Yang2 and Yan Lu1

1Systems Integration Division, National Institute of Standards and Technology, USA

2Department of Mechanical and Industrial Engineering, University of Massachusetts, USA

*Corresponding author: Albert Jones, Systems Integration Division, National Institute of Standards and Technology, Gaithersburg, MD 20899, USA

Submission: September 03, 2020; Published: October 15, 2020

ISSN 2640-9690 Volume 3 Issue 2

Abstract

The English word manufacturing first appeared in 1683; it derives from the Latin manu factus, meaning made by hand. Over thousands of years, and through four Industrial Revolutions, the physical, mostly mechanical, processes associated with making things have evolved substantially. That evolution, which we call physical automation, has essentially changed manufacturing from making by human hand only to making by machine only. A similar evolution is taking place in the cognitive processes that occur as part of design, engineering, manufacturing, control, and inspection. We call that evolution cognitive automation. In this paper, we provide a history of both physical and cognitive automation, organized around summaries of the four Industrial Revolutions. We then focus on the current state of cognitive automation in Additive Manufacturing, and specifically on how it has changed the control of Additive Manufacturing processes.

Keywords: Cognition; Evolution; Automation; Industrial evolution; Additive manufacturing

Introduction: The Four Industrial Revolutions

Manufacturing involves two major types of processes: physical and cognitive. To date, there have been four industrial revolutions, and each one has focused on creating technologies that automate those processes [1]. The first two revolutions focused exclusively on new types of machines that could automate physical processes. The third and fourth revolutions have focused on new technologies that can automate both types of processes.

The first industrial revolution

The First Industrial Revolution, which occurred from 1760 to about 1840, brought the first transition from manufacturing by hand to manufacturing by mechanical processes (machines), which could operate “automatically” using either water or steam power [2]. The pre-, during-, and post-manufacturing activities, which were all cognitive activities, were still done by humans. The machines also needed maintenance, which was likewise done by humans. Fortunately, the implementation of Eli Whitney’s concept of interchangeable parts made manufacturing and repairing machines and products easier. With it arose, for the first time, the need for “physical” standards to make that interchangeability possible (Figure 1).

Figure 1: The four industrial revolutions [3].

The second industrial revolution

The Second Industrial Revolution, also known as the Technological Revolution, occurred from the late 19th century into the early 20th century (approximately 1860 to 1910) [4]. This second revolution brought major advances in technologies that could automate a wide range of the emerging, individual manufacturing technologies of that era. These automated processes, along with the proper physical infrastructure and physical standards, led to the creation of large factories. From an economy’s perspective, those physical standards allowed individual factories to specialize at the component level.

Mass production ensued. For example, according to [5], mass production of the Ford Model T used 32,000 machine tools. This was possible only because electricity became the dominant energy source, which provided the flexibility to move machines within the factory to optimize the workflow. This increased flexibility and physical automation also allowed owners to operate their factories with an increasingly lower-skilled labor force. Not surprisingly, workers' skill levels declined relative to those of the craftsmen of the First Industrial Revolution. The formerly highly skilled human manufacturers were largely becoming human controllers of automated machines. But, as noted earlier, all cognitive tasks were still performed by humans.

Clearly, by the end of the Second Industrial Revolution, physical automation was advancing rapidly and widely across the entire global economy. Technologies to automate mechanical processes (electrified machines) were appearing across a wide spectrum of manufacturing and other domains. Moreover, new, electricity-based communication technologies, including the telegraph, the telephone, and the radio, were becoming widely available. These technologies could create an analog representation of information and transmit that representation over long distances.

The third industrial revolution

By the end of the Second Industrial Revolution, and for the first time, the results of cognitive processes (manufacturing information) could be communicated automatically across ever-increasing distances [6]. Domestic supply chains appeared and flourished. As international, wired communication became possible and reliable, foreign suppliers became members of those supply chains. However, the cognitive processes needed to 1) create that information on one end of the wire and 2) analyze and understand that information on the other end were still performed only by humans, wherever they took place. Automating those cognitive processes would have to wait until the next revolution.

The Third Industrial Revolution was driven by both new, automated, mechanical-process technologies and the first generation of automated information technologies (IT). These technologies, which included sensors, computers, hardware, and software, could automate some cognitive functions [7]. Sensors 1) collect analog signals from the real world, 2) convert those analog signals into digital signals, and then 3) communicate those digital signals to either a locally connected or an internet-connected computer. Software resident on that computer can transform those signals into “information”, analyze that information, and use that analysis to automate cognitive functions previously performed only by humans.

Towards the end of the third revolution, an explosion of new communications and sensor technologies enabled ubiquitous digital connectivity among intelligent devices, machines, sensors, and actuators. This connectivity, called the Industrial Internet of Things (IIoT), fundamentally changed the way we manufacture goods. A similar explosion of new data analytics technologies, especially Artificial Intelligence (AI), enabled widespread access to cloud-based analytical services. This ubiquitous physical and informational connectivity expanded manufacturing into a global smart ecosystem, named smart manufacturing. Smart manufacturing has the capability to produce high-quality products and to adapt quickly to changing conditions both inside and outside the factory. These new IT technologies, together with global connectivity, are providing the foundations for the enhanced cognitive automation of the Fourth Industrial Revolution.

The fourth industrial revolution

The fourth industrial revolution, sometimes called Industry 4.0 (I4.0), is attempting to create a world in which virtual and physical systems of manufacturing cooperate with each other in a flexible way [8,9]. I4.0 is characterized by

1) Increased automation of new physical processes, such as Additive Manufacturing (AM), which are quite different from those of the Third Industrial Revolution

2) New ways of linking the physical and digital worlds through new cyber-physical systems (CPS), the Internet of Things (IoT), and cloud computing, among other advanced information and cognitive technologies

3) A shift from a single, central, hierarchical, industrial control system such as ISA95 [10] to a distributed industrial control architecture such as RAMI 4.0 [11]

4) New sensors that collect multiple, image-based data types, which require new data models and new control decisions based on a myriad of data analytics and AI cloud services

In the third revolution, the emphasis was on using simple sensors to collect enough numerical data to use physics-based models to automate the existing physical manufacturing processes. Today, for most manufacturers, having enough data is no longer a problem. In fact, most have access to more data than humans can possibly process, analyze, and act on. The problem now is that the underlying physics associated with new I4.0 manufacturing technologies, such as AM, is not completely understood. Consequently, the major focus of I4.0, and of this paper, is on using technologies such as data analytics, machine learning, and artificial intelligence to automate many of the human-based cognitive functions that were still dominant in the third revolution.

More on Cognitive Automation

In a very real sense, cognitive automation began in the Third Industrial Revolution, when the first cognitive functions that had formerly been performed only by humans were automated. That automation was based on the ability of a sensor to collect digital information and communicate it to a computer wired directly to the sensor. At that time, there were only two types of information, numbers and text, and the analysis was limited by the software on that computer. Over time, the sensing devices, the information types, the communication methods, and the analysis software became more sophisticated and commercially available. But only humans could make the required decisions, whether design decisions or control decisions.

From our perspective, the fourth revolution began when some of those formerly human-only decisions started being made by software tools that could use a wide range of new types of information collected by new types of sensors. These new sensors could collect images in a variety of “formats” based on an almost continuous stream of new, commercially available imaging technologies. Software tools that could analyze those different image formats quickly emerged, automating a completely new set of cognitive functions associated with those images.

In our view, the developments described in the previous paragraph amount to an evolution, not a revolution: a natural, evolutionary path from the third revolution. The revolution is about using the results of those previously automated cognitive functions as inputs to new types of automated cognitive functions, namely decision-making functions. Two relatively new types of software tools, data analytics and AI, are trying to automate the functions involved in the decision-making process. Furthermore, these new tools frequently use results from several earlier cognitive-automation tools simultaneously.

The results from those various sensors must be “fused” before they are communicated automatically to those software tools. Each of these new, decision-making, cognitive-automation tools can automatically 1) take those results as input, 2) make a prediction based on those inputs, and 3) decide based on that prediction. Sometimes only one such tool is needed to make that prediction. Often, however, multiple tools are needed. In that case, the tools must be “composed” automatically before the final prediction can be made.

To make concrete what we mean by the different uses of the term “cognitive automation”, we use AM as an exemplar of the new, emerging, I4.0-based, mechanical-process technologies.
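The input, predict, and decide steps, and the composition of several tools, can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the `Tool` and `compose` names, the two toy "models", and the numeric threshold are hypothetical and do not come from any tool discussed in this paper.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical types and names, used only to illustrate the pattern.
Features = Dict[str, float]


@dataclass
class Tool:
    name: str
    predict: Callable[[Features], Features]  # step 2: prediction from inputs


def compose(tools: List[Tool]) -> Callable[[Features], Features]:
    """Chain tools so each one sees the fused inputs plus earlier predictions."""
    def run(fused: Features) -> Features:
        state = dict(fused)  # step 1: fused sensor results are the input
        for tool in tools:
            state.update(tool.predict(state))
        return state
    return run


def decide(state: Features, max_area_mm2: float = 0.05) -> str:
    """Step 3: turn the final prediction into a control decision."""
    if state["pool_area_mm2"] > max_area_mm2:
        return "reduce_power"
    return "keep_settings"


# Toy stand-ins for trained models; the formulas are made up.
energy_tool = Tool(
    "energy", lambda s: {"line_energy": s["laser_power_w"] / s["scan_speed_mm_s"]}
)
size_tool = Tool("size", lambda s: {"pool_area_mm2": 0.2 * s["line_energy"]})

pipeline = compose([energy_tool, size_tool])
state = pipeline({"laser_power_w": 300.0, "scan_speed_mm_s": 800.0})
decision = decide(state)
```

In this sketch the second tool depends on the output of the first, which is the simplest form of composition; a real system would also need to check that the tools' inputs and outputs are semantically compatible.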

Additive Manufacturing (AM): A Typical Mechanical Process of the Future

The 21st century has seen the proliferation of a new type of mechanical manufacturing process: AM. The earliest AM equipment and materials were developed in the 1980s for creating models and prototype parts [12]. AM has the potential to create almost any geometry and any structure, which is its major benefit over traditional-manufacturing (TM) processes. Consequently, AM processes are exciting and AM parts have potential applications in many domains [13]. In fact, numerous market projections and technical papers have discussed the potential economic benefits of AM. The AM processes that build those geometries involve radically different materials, machines, methods, and sensors. In this paper, we focus on one of the most used AM processes, powder bed fusion (PBF).

PBF machines build a part by adding and melting new powder in a layer-by-layer fashion; furthermore, each layer involves multiple phase changes in the powder [14]. The usual chain runs from powder to liquid to solid to liquid and then back to solid. This multi-physics chain produces highly complex process dynamics [13]. Ensuring part quality in the face of these dynamics requires monitoring and controlling hundreds of AM process parameters simultaneously. For example, a typical PBF process uses a laser as a heat source to fuse the powder that is spread on the build plate (Figure 2). At each layer, the laser beam moves along its predefined path to form a thin layer corresponding to a cross-section of the part. Layer thickness determines the minimum resolution of a build. Melt-pool formation is one of the most important sub-processes of PBF. It involves complex physical phenomena such as thermal conduction, fluid dynamics, melting, and solidification [15].

Figure 2: Typical PBF machine, camera sensors, and melt-pool images [16,17].

Currently, nearly all commercial PBF machines build parts automatically. Once a process starts, it continues until the machine finishes the build using a pre-loaded toolpath. AM machines cannot recognize what is happening during a process, and they cannot inform the operator. To control a process, the first step is to establish a comprehensive in-situ monitoring system. Figure 2 shows such a monitoring system, which was built in the Additive Manufacturing Metrology Testbed (AMMT) at NIST [16,17]. This testbed captures in-situ data to monitor melt-pool formation. The monitoring system in AMMT generates thousands of in-situ images every second using multiple sensors, such as a coaxial imager and a layer-wise imager.

The manufacturing industry is expected to benefit substantially from the application of AM technology, with a projected 33% market share by 2026 [8,9,10]. The reason is simple: AM parts offer significantly more complex geometries and structural properties, with significantly shorter lead times, than traditionally manufactured parts. Researchers believe that controlling the melt pool, based on different images, is an effective way to improve process control. Melt-pool stability is directly related to the final quality of both part geometries and properties. Controlling the melt pool successfully requires the execution of several cognitive functions. As noted above, the major emphasis of I4.0 is on automating those functions.

Cognitive automation in melt-pool-based process control

Melt-pool formation is one of the intermediate processes responsible for converting metal powder into the final part in PBF. The laser head, galvo, and recoating blade work together to create the desired melt pools. However, the process has many uncertainties that easily produce unexpected melt-pool-formation results [18,19]. Thus, monitoring sensor data and making control decisions quickly are extremely important. Melt-pool images are typically noisy, while melt-pool shape and temperature distribution are complicated. It is impossible for humans to process such information and change process settings in time once random melt-pool defects occur.

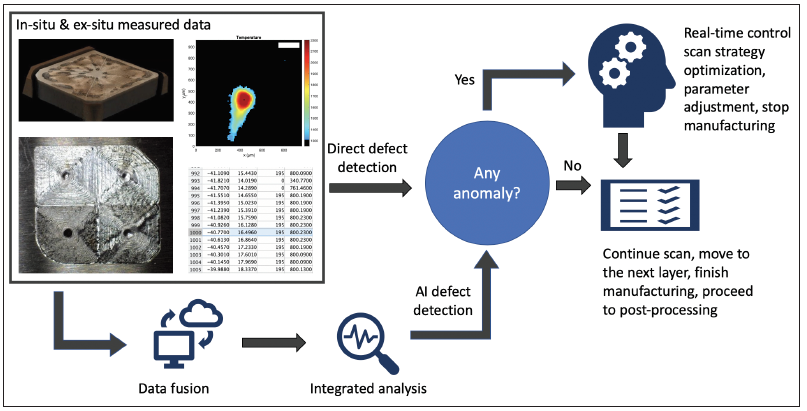

Nor can traditional control techniques, which rely on physics-based or simple data-driven cognitive tools, solve the real-time melt-pool monitoring and control problem. The emerging cognitive-automation functions and tools used to control melt-pool formation in AM processes are now based on multiple in-situ monitoring sensors, AI, and ex-situ measurement results. Figure 3 shows a general workflow to control the melt-pool formation process. It also shows other data types that can be collected during a build. Currently, five types of data are considered: 1) preloaded process commands, 2) encoder-collected real positions, 3) melt-pool coaxial images, 4) layer-wise images, and 5) X-ray Computed Tomography (XCT) scan data.

Figure 3: General workflow of cognitive automation in melt-pool-based process control.

The workflow in Figure 3 captures several cognitive functions. The first function attempts to detect obvious defects, such as bad recoating, a missing melt pool, and failed layer exposure. The second cognitive automation (CA) function is called data fusion. It aligns and transforms the individual sensor data into an integrated whole, and predicts process stability or part quality. For typical single-point measurement data types, traditional fusion methods are still applicable. Fusing melt-pool images in time series, however, is significantly more difficult. For example, even if the individual images show no distinguishing defective features, the melt-pool formation could still be defective. This problem can be addressed using a CA tool called similarity analysis. Estimating melt-pool size is another cognitive function. A further cognitive function in the workflow is the scan strategy optimization performed after defects are detected. The optimization function can be applied in near real time for layer-wise feedforward control or offline for scan strategy design.
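As a rough illustration of the alignment step in data fusion, the following Python sketch pairs each melt-pool image frame with the nearest-in-time command and encoder samples. It is a simplified assumption of how single-point streams might be aligned, not the fusion method used on the AMMT; the data layout, field names, and tolerance value are all hypothetical.

```python
from bisect import bisect_left
from typing import Dict, List, Tuple

# Hypothetical layout: each stream is a non-empty, time-sorted list of
# (timestamp_seconds, value) pairs. It does not mirror any AMMT data format.
Sample = Tuple[float, float]


def nearest(stream: List[Sample], t: float) -> Sample:
    """Return the sample whose timestamp is closest to t."""
    times = [ts for ts, _ in stream]
    i = bisect_left(times, t)
    candidates = stream[max(i - 1, 0): i + 1]
    return min(candidates, key=lambda s: abs(s[0] - t))


def fuse(commands: List[Sample], encoder: List[Sample], images: List[Sample],
         tolerance_s: float = 0.0005) -> List[Dict[str, float]]:
    """Build one record per image frame from the nearest command and encoder
    samples; frames without a close enough match are dropped."""
    fused = []
    for t, pool_area in images:
        cmd_t, power = nearest(commands, t)
        enc_t, x_pos = nearest(encoder, t)
        if abs(cmd_t - t) <= tolerance_s and abs(enc_t - t) <= tolerance_s:
            fused.append({"t": t, "power_w": power, "x_mm": x_pos,
                          "pool_area_px": pool_area})
    return fused


# Made-up example streams.
commands = [(0.0000, 195.0), (0.0010, 195.0), (0.0020, 150.0)]
encoder = [(0.0001, 10.0), (0.0011, 10.8), (0.0021, 11.6)]
images = [(0.0002, 410.0), (0.0012, 405.0), (0.0040, 398.0)]

records = fuse(commands, encoder, images)
```

The last frame is dropped because no command or encoder sample lies within the tolerance. Nearest-timestamp matching of this kind only covers single-point measurements; it does not address the harder problem of fusing image time series described above.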

Using AI tools

In a NIST AMMT study, we used pre-trained AI models, based on hundreds of previous experiments, to automate two cognitive functions: making accurate predictions of melt-pool size and making optimized decisions for scan strategy. We trained a feedforward neural-network model on millions of digital commands and hundreds of thousands of melt-pool coaxial images. The model uses pre-processed process variables to predict melt-pool size [17]. The AI predictive model significantly improves accuracy and efficiency over the traditional power-speed model [17]. The same AI model was applied to AM process optimization to prevent overheating and lack-of-fusion defects [20].
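For readers unfamiliar with the term, the forward pass of a small feedforward network can be sketched in a few lines of Python. This is only an illustration of the model family: the two inputs, the layer sizes, and every weight below are invented, and the actual inputs and architecture of the trained model are those described in [17].

```python
from typing import List

# Invented weights for a 2-input, 2-hidden-unit, 1-output network.
W1 = [[1.0, -1.0], [0.5, 0.5]]
B1 = [0.0, 0.0]
W2 = [[0.6, 0.2]]
B2 = [0.1]


def relu(v: List[float]) -> List[float]:
    """Element-wise rectified linear activation."""
    return [max(0.0, x) for x in v]


def dense(x: List[float], weights: List[List[float]], bias: List[float]) -> List[float]:
    """Compute W x + b for a weight matrix stored as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]


def predict_pool_size(power_norm: float, speed_norm: float) -> float:
    """Map normalized process variables to a (unitless) melt-pool size."""
    hidden = relu(dense([power_norm, speed_norm], W1, B1))
    return dense(hidden, W2, B2)[0]


hot = predict_pool_size(0.8, 0.2)   # high power, low speed
cold = predict_pool_size(0.2, 0.8)  # low power, high speed
```

With these made-up weights, the high-power, low-speed setting yields the larger predicted size. A trained model would learn its weights from the command and image data rather than have them set by hand.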

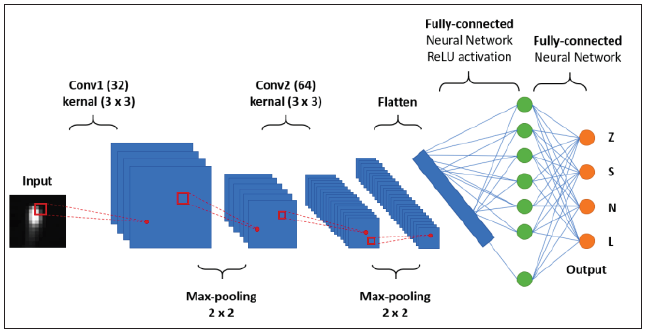

In the same case study, we developed a CNN model to detect anomalies in the melt-pool size [21]. From a melt-pool image, this model can recognize whether a melt pool exists and, if so, whether it is too big or too small. The PBF process controls several parameters to fabricate parts. Three parameters affect melt-pool size the most: laser power, scan speed, and moving path. Ideally, as the fabrication continues, the melt pools produced using these parameters have similar shapes, sizes, and intensities. However, due to random uncertainties in the melting process, the melt pool itself can be very unstable. An oversized melt pool may indicate overheating in the area, while small melt pools are correlated with lack-of-fusion defects. Both conditions can lead to porosity, which can significantly reduce AM part quality.

The CNN model gives the machine the ability to perform real-time monitoring automatically. In this study, we produced 12 parts using different scan strategies in laser power, scan speed, and tool path. The melt-pool-monitoring, high-speed camera collected over 1 million images to train the model. The final model achieves an accuracy of over 90%. We are working on embedding this model into AMMT. Once that is done, the machine will have the full ability for real-time monitoring and feedback control (Figure 4).

Figure 4: CNN architecture for melt-pool size classification [21].
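To make the classification task concrete, the sketch below labels a frame by counting bright pixels. It is a deliberately naive, hand-written baseline and not the CNN of [21]; the intensity threshold, the pixel-count limits, the label names, and the synthetic frames are assumptions chosen for illustration.

```python
from typing import List

Image = List[List[int]]  # grayscale intensities in the range 0..255


def pool_area_px(image: Image, threshold: int = 128) -> int:
    """Count pixels brighter than the threshold as a proxy for melt-pool size."""
    return sum(1 for row in image for px in row if px > threshold)


def classify(image: Image, min_px: int = 4, max_px: int = 9) -> str:
    """Label a frame by its bright-pixel count (hypothetical limits)."""
    area = pool_area_px(image)
    if area == 0:
        return "no_melt_pool"
    if area < min_px:
        return "too_small"
    if area > max_px:
        return "too_large"
    return "normal"


def frame(side: int, size: int = 6) -> Image:
    """Synthetic frame: a bright side-by-side square on a dark background."""
    return [[255 if r < side and c < side else 0 for c in range(size)]
            for r in range(size)]


labels = [classify(frame(side)) for side in (0, 1, 3, 4)]
```

A fixed threshold like this breaks down on noisy images with spatter or plume, which is one reason a learned classifier is attractive for this task.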

Commercializing Cognitive Automation Tools

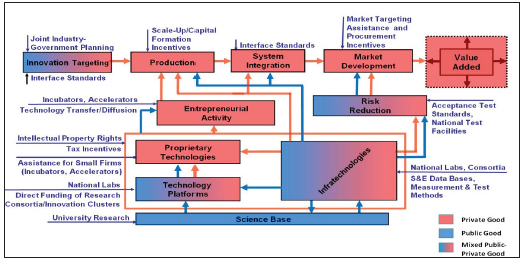

Figure 5: The technology life cycle.

The manufacturing industry is expected to benefit substantially from the application of AM technology. As noted above, however, controlling the processes that make those parts requires new cognitive-automation methods and tools. Currently, those methods and tools are still in the research phase. To better understand what is needed to traverse the path from research to commercialization, see Figure 5, which illustrates a concept discussed in [22,23].

Examples of infratechnologies frequently embodied in non-product standards include measurement and test methods, interface specifications, scientific and engineering databases, and artifacts such as standard reference materials. The majority of infratechnologies can be placed in one of four categories: Scientific and Engineering Data, Measurement and Test Methods, Interfaces, and Production Practices and Techniques. Scientific and Engineering Data are needed for conducting R&D, controlling production, and consummating market transactions. Measurement and Test Methods are essential for conducting R&D, monitoring production, and executing market transactions. Production Practices and Techniques include models for a better understanding of the causal relationships among production parameters, thereby allowing more efficient design and control of production processes. Interfaces are essential for efficient physical and functional integration of the components of product and service systems. The majority of these infratechnologies will eventually become standards that improve the efficiency of both the underlying science activities and the promulgation of their results across the entire technology life cycle.

The science of information

In our view, the science underpinning the commercialization of cognitive-automation tools and other technologies, together with its associated activities, is the science of information. In [24], the author argues that the universe is made up of three distinct but related components: matter, energy, and information. Each component has many physical and structural instantiations. Matter is an abstract quantity that has the capacity to represent physical reality. Energy is an abstract quantity that has the capacity to do work. In manufacturing, that work involves transforming matter from one form to another. In addition, matter has a science, called materials science; the main mathematical foundations for that science include geometry and trigonometry. Energy also has a science, called physical science, or physics for short; the main mathematical foundations for that science include calculus and thermodynamics. Mathematically, matter and energy are related through Einstein’s equation E = mc².

According to [24], information is an abstract quantity that has the capacity to organize work. Since information is about organization, it is related to entropy. The mathematical relationship between information and entropy is i=e/h1/2. As discussed above, information also has many different physical and structural instantiations, which have changed over the course of the four industrial revolutions. Today, in the fourth revolution, the physical instantiation of information is digital; the structural representation of that digital form varies considerably. For information to achieve its “capacity to organize work”, Stonier argues that a new science is needed. Here, we briefly discuss two aspects of that science as it pertains to the automation of the cognitive work associated with AM: AI and hybrid modeling.

Artificial intelligence: Fundamental research is needed on training AI models and on evaluating the appropriateness of an AI model for a specific application domain [25]. The handling of training-data quality and quantity for AI and machine learning (AI/ML) models is largely ad hoc today [26]. Ideally, the data used in developing an AI model mirrors the real world as closely as current knowledge allows. Combinatorial coverage measurement concepts make it possible to quantify the gap (delta) between artificial and non-artificial data, or between a training data set and the real world. EL and ITL teams will develop these input-space measurement concepts further, and will design and conduct experiments on the AMMT for measurement and corroboration.
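One simple way to read "combinatorial coverage" is as the fraction of all t-way combinations of input values that appear in a data set. The Python sketch below computes that fraction for t = 2 and lists the combinations seen in field data but absent from training data. It is an illustrative assumption rather than the measurement method planned for the AMMT; the variable names, the discretized values, and the example rows are made up.

```python
from itertools import combinations
from math import prod
from typing import Dict, List, Sequence, Set, Tuple

Row = Dict[str, str]
Combo = Tuple[Tuple[str, str], ...]


def t_way_combos(rows: Sequence[Row], t: int = 2) -> Set[Combo]:
    """All t-way (variable, value) combinations that occur in the rows."""
    found: Set[Combo] = set()
    for row in rows:
        for names in combinations(sorted(row), t):
            found.add(tuple((n, row[n]) for n in names))
    return found


def coverage(rows: Sequence[Row], domain: Dict[str, List[str]], t: int = 2) -> float:
    """Fraction of all possible t-way value combinations present in rows.
    Assumes every value in rows belongs to the declared domain."""
    total = sum(prod(len(domain[n]) for n in names)
                for names in combinations(sorted(domain), t))
    return len(t_way_combos(rows, t)) / total


# Hypothetical discretized process settings.
domain = {"power": ["low", "high"], "speed": ["slow", "fast"],
          "path": ["raster", "island"]}
train = [{"power": "low", "speed": "slow", "path": "raster"},
         {"power": "high", "speed": "fast", "path": "raster"}]
field = [{"power": "high", "speed": "slow", "path": "island"}]

train_coverage = coverage(train, domain)
gap = t_way_combos(field) - t_way_combos(train)
```

Here the training set covers half of the twelve possible 2-way combinations, and all three pairs in the field row are unseen in training, which is the kind of gap a coverage measure is meant to expose.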

Even more important than training and performance is the issue of assurance and trust in AI/ML systems. Currently, the strongest software verification and test methods are domain-specific and cannot be applied to neural networks and other AI methods. To deliver explainable AI models, it is essential that the encoding of training data into tensor features, neural network layers, and the neural nodes at each layer is well understood. In addition, when AI models are viewed as black boxes, combinatorial software testing has shown the ability to verify model accuracy even for extremely rare inputs. Customized experiments on AMMT will allow us to conduct unique research to further explore these phenomena and to develop measurement science that can be generalized for instilling trust in models from other domains [26,27].

Hybrid modeling: In AM, hybrid modeling is a major cognitive activity, and there are three types: hybrid data models (fusion), hybrid process models (composition), and hybrid data-process models (integration) [28]. In this paper, we focus on fusion and composition. As noted above, fusing data is necessary in AM control because the data models include images, database schemas [29], XML schemas, and ontologies [30,31]. Currently, the mathematical foundations for developing science-based fusion methodologies do not exist.

Because of the domain’s depth and complexity, applying AI in AM will require the composition of many localized process models across a range of scales, uncertainties [32,33], and abstraction barriers. There are physics-based models, data analytics models, and AI models. Again, the science-based methods for composing these different process models do not exist. Such a composition process [34] requires a rigorous semantic model of the AM build process at each scale, a classification of in-situ monitoring data [35,36], a data dictionary documenting AM data formats and exchange mechanisms, and a formal framework that can be used for both data fusion and model composition.

Summary

We are currently in the Fourth Industrial Revolution. The first three revolutions focused primarily on automating the physical processes associated with manufacturing. In the current revolution, physical automation continues with a new type of manufacturing, AM. Nevertheless, the major focus is on cognitive automation. In this paper, we discussed one of the main areas for cognitive automation, AM control. As an example, we discussed the control of laser PBF processes, which is based on new types of image sensors and the use of AI. AI tools can be used to analyze the data from those sensors, make predictions about the melt-pool size, and change process parameters if needed. To improve AM process control, these AI tools must be commercialized, which we discussed using the technology life cycle proposed in [23]. Finally, we discussed the lack of the scientific foundations needed to facilitate that commercialization. In the future, we plan to develop those foundations.

References

1. Xu, Min, Jeanne David M, and Suk Hi Kim (2018) The fourth industrial revolution: Opportunities and challenges. International Journal of Financial Research 9(2): 90-95.

2. Lawrence B (2013) From hand to handle: the first industrial revolution. Oxford University Press, USA.

3. Lu, Yan, Paul Witherell, Albert Jones (2020) Standard Connections for IIoT Empowered Smart Manufacturing. Manufacturing Letters 26: 17-20.

4. Mokyr Joel (1998) The second industrial revolution, 1870-1914. Storia dell’economia Mondiale 21945.

5. https://faculty.babson.edu/krollag/org_site/org_theory/barley_articles/hounshell_mass.html

6. Rifkin Jeremy (2011) The third industrial revolution: how lateral power is transforming energy, the economy, and the world. Macmillan Publishers, USA.

7. Greenwood Jeremy (1997) The third industrial revolution: Technology, productivity, and income inequality. No. 435. American Enterprise Institute.

8. https://www.i-scoop.eu/industry-4-0/

9. https://www.iberdrola.com/innovation/fourth-industrial-revolution

10. https://www.isa.org/standards-and-publications/isa-standards/isa-standardscommittees/isa95

11. https://list.ly/list/1RRA-reference-architectural-model-industrie-4-dot-0-rami-4-dot-0

12. Frazier WE (2014) Metal additive manufacturing: A review. J of Material Eng and Perform 23: 1917-1928.

13. https://engineeringproductdesign.com/knowledge-base/powder-bed-fusion/

14. King Wayne E, Andrew T Anderson, Robert M Ferencz, Neil E Hodge, et al. (2015) Laser powder bed fusion additive manufacturing of metals; physics, computational, and materials challenges. Applied Physics Reviews 2(4): 041304.

15. Hooper Paul A (2018) Melt pool temperature and cooling rates in laser powder bed fusion. Additive Manufacturing 22: 548-559.

16. https://www.nist.gov/el/ammt-temps

17. Yang Z, Lu Y, Yeung H, Krishnamurty S (2020) From scan strategy to melt pool prediction: A neighboring-effect modeling method. Journal of Computing and Information Science in Engineering 20(5).

18. Lu, Yan, Zhuo Yang, Douglas Eddy, Sundar Krishnamurty (2018) Self-improving additive manufacturing knowledge management. International Design Engineering Technical Conferences and Computers and Information in Engineering Conference, American Society of Mechanical Engineers, 51739: V01BT02A016.

19. Yan Wentao, Yan Lu, Kevontrez Jones, Zhuo Yang, Jason Fox, et al. (2020) Data-driven characterization of thermal models for powder-bed-fusion additive manufacturing. Additive Manufacturing 36: 101503.

20. Yeung H, Yang Z, Yan L (2020) A meltpool prediction based scan strategy for powder bed fusion additive manufacturing. Additive Manufacturing 35: 101383.

21. Yang Z, Lu Y, Ho Y, Krishnamurty S (2019) Investigation of deep learning for real-time melt pool classification for additive manufacturing. 2019 IEEE 15th Intl Conference on Automation Science and Engineering (CASE), IEEE, pp. 640-647.

22. Tassey G (2020) Globalization and the high-tech policy response. Annals of Science and Technology Policy 4(3-4): 211-376.

23. Tassey G (2017) The roles and impacts of technical standards on economic growth and implications for innovation policy. Annals of Science and Technology Policy 1(3): 215-316.

24. Stonier T (1990) Information and the internal structure of the universe: An exploration into information physics. Springer, ISBN 978-1-4471-3265-3.

25. Almuallim H, Dietterich TG (1991) Learning with many irrelevant features. AAAI 91: 547-552.

26. Razvi S, Feng S, Narayanan A, Lee T, Witherell P (2019) A review of machine learning applications in additive manufacturing. ASME 2019 International Design Engineering Technical Conferences and Computers and Information in Engineering Conference (American Society of Mechanical Engineers Digital Collection).

27. Petrich J, Gobert C, Phoha S, Nassar A, Reutzel E (2019) Machine learning for defect detection for PBFAM using high resolution layerwise imaging coupled with post-build CT scans. Proceedings of the 28th Annual International Solid Freeform Fabrication Symposium - An Additive Manufacturing Conference, pp. 1363-1381.

28. Yang Zhuo, Douglas Eddy, Sundar Krishnamurty, Ian Grosse, Peter Denno, et al. (2017) Investigating grey-box modeling for predictive analytics in smart manufacturing. In International Design Engineering Technical Conferences and Computers and Information in Engineering Conference, American Society of Mechanical Engineers, 58134: V02BT03A024.

29. Wisnesky Ryan, Spencer Breiner, Albert Jones, David I Spivak, Eswaran Subrahmanian (2017) Using category theory to facilitate multiple manufacturing service database integration. Journal of Computing and Information Science in Engineering 17(2): 021011.

30. Roh, Byeong-Min, Soundar RT Kumara, Timothy W Simpson, Panagiotis Michaleris, et al. (2016) Ontology-based laser and thermal metamodels for metal-based additive manufacturing. In International Design Engineering Technical Conferences and Computers and Information in Engineering Conference, American Society of Mechanical Engineers, 50077: V01AT02A043.

31. Ko H, Witherell P, Lu Y, Kim S, Rosen D (2020) Machine learning and knowledge-graph-based design rule construction for AM. Additive Manufacturing p. 101620.

32. Moges T, Witherell P, Gaurav A (2020) On characterizing uncertainty sources in laser powder bed fusion additive manufacturing models. ASME International Mechanical Engineering Congress.

33. Moges T, Wentao Y, Lin S, Gaurav A, Fox J, et al. (2018) Quantifying uncertainty in laser powder bed fusion additive manufacturing models and simulations. Proceedings of the 29th Annual International Solid Freeform Fabrication Symposium.

34. Breiner S, Sriram R, Subrahmanian S (2019) Compositional models for complex systems. Academic Press, Massachusetts, USA.

35. Feng S, Lu Y, Jones A (2020) Meta-Data for in-situ monitoring laser powder bed fusion processes. Proceedings of the ASME 2020 15th International Manufacturing Science and Engineering Conference, paper #: MSCE2020-8344.

36. Feng S, Lu Y, Jones A (2020) Measured data alignment for monitoring metal additive processes using laser powder bed fusion methods. Proceedings of the ASME 2020 International Design Engineering Technical Conferences & Computers & Information in Engineering Conference, Paper #: IDETC2020-22478.

© 2020 Albert Jones. This is an open access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and build upon your work non-commercially.
