COJ Robotics & Artificial Intelligence

The Ternion Technology for Actionable Intelligence: Fog Computing, Robotic and AI

Nair B*

Department of Computer Engineering, India

*Corresponding author: Nair B, Department of Computer Engineering, India

Submission: November 08, 2020; Published: December 02, 2020

ISSN: 2832-4463 Volume 1 Issue 2

Abstract

Ubiquitous computing generates valuable data on a large scale through internet-enabled devices. The data belong to smart autonomous systems, and analyzing them at different time scales helps in both quick and long-term decision making. Collaborative analytics on data from various interdisciplinary autonomous systems can yield greater insight into solving generic problems. The most promising combination of technologies for enabling real-time and batch multi-disciplinary data analytics is fog computing, robotics, and artificial intelligence (AI). This paper discusses the prominence and role of each of these technologies through the use case of the novel coronavirus (nCoV).

Introduction

We are in the era of sentient computing, in which sensors perceive their surroundings for applications ranging from monitoring and surveillance to actionable intelligence. The omnipresence of internet-enabled devices gives rise to ubiquitous data-centric networks that generate large volumes of data at high velocity. This accumulation of raw data over a short period creates big data, a source of valuable information. Efficient analytics on big data yields insights that are useful for instantaneous, short-term, and long-term decision making. Big data analytics unveils information through rigorous processing and examination of data over its entire lifetime. It spans real-time and batch analytics, which together provide a range of information as the data pattern evolves. An elaborate analysis of the collected data reveals hidden patterns, correlations, and other statistical properties that enable informed decision making and prediction. Real-time big data analytics is sensitive to changes in the incoming data because it processes data online, as and when it is received. It provides actionable intelligence for quick decision making in dynamic settings such as industry, vehicular networks, business, health care, disaster management, and defense. Batch data analysis deals with the processing and comprehension of data collected in batches over a fixed time scale. Deep analytics on this global data yields more mature and stable information and helps in long-term decision making and planning.
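The contrast between real-time and batch analytics can be illustrated with a minimal Python sketch. It is not taken from the paper: the `RunningStats` class, the choice of Welford's online algorithm, and the sensor readings are all assumptions made for illustration. The online path updates its statistics as each reading arrives, while the batch path computes the same statistics once over the stored readings.

```python
import math
import statistics


class RunningStats:
    """Welford's online algorithm: updates mean and variance one reading at a time."""

    def __init__(self):
        self.count = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, value):
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self._m2 += delta * (value - self.mean)

    @property
    def variance(self):
        # Sample variance; reported as 0.0 when fewer than two readings exist.
        return self._m2 / (self.count - 1) if self.count > 1 else 0.0


# Hypothetical sensor readings, for illustration only.
readings = [21.0, 21.5, 22.0, 30.0, 21.8]

# Real-time path: each reading is processed as it arrives.
stream = RunningStats()
for reading in readings:
    stream.update(reading)

# Batch path: the stored readings are processed together afterwards.
batch_mean = statistics.mean(readings)
batch_variance = statistics.variance(readings)

# Both paths agree up to floating-point rounding.
print(math.isclose(stream.mean, batch_mean))
print(math.isclose(stream.variance, batch_variance))
```

The online version never stores the readings, so an estimate is available after every update, whereas the batch version needs the full data set before it can report anything.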

The need of the hour is technology that can integrate and analyze the voluminous data generated by autonomous systems such as smart cities, smart vehicular networks, smart agriculture, smart grids, and smart healthcare. These are mostly cyber-physical or IoT-based systems that integrate information and communication technology. Requirement-driven analysis needs deep insight into data from multiple sources, which in turn calls for robust data integration, analysis, and collaboration. Real-time, near-real-time, and batch analytics demand technologies that work in concert to analyze data both in real time and over longer durations. Multi-purpose analysis therefore requires a framework that collects data from various autonomous systems, integrates it, and analyzes it for actionable intelligence. For example, data generated by smart cities, smart healthcare systems, and an IoT-based seismic monitoring system are also useful for other purposes, such as disaster management. Interdisciplinary research requires combining multi-disciplinary data in different formats from varied sources to produce well-formed information for decision making. Such a framework must collect, process, and analyze fast-streaming data to support both prompt and long-term decisions.

The combination of fog computing, robotics, and artificial intelligence (AI) is a promising way to draw on multidisciplinary data from different autonomous systems and gain deep insights from diverse perspectives. Fog computing extends cloud-computing services to the edge of the network for latency-sensitive applications, facilitating real-time data analytics. The cloud data center performs analytics on batches of data received from fog nodes for long-term decision making. The interplay between fog and cloud offers a balance between real-time and long-term batch analytics. Complex AI-based algorithms in robotics deliver actionable intelligence by learning from the analytics performed at the fog nodes and the cloud data center. Fog computing provides distributed data analytics and edge intelligence, while robotics with AI refines, realizes, and reinforces decisions in real life. Robotics and AI [1] have a diverse range of applications, including marketing, banking, industry, agriculture, healthcare, defense, space exploration, and autonomous vehicles. Robots can be used for remote monitoring and under conditions that are not conducive to human operation.
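The integration of differently formatted data from several autonomous systems, as called for above, might look like the following sketch. Everything in it is hypothetical: the `Observation` schema, the two source formats, and the sample values are assumptions chosen only to show how heterogeneous records could be normalized into one shape before analysis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """Common record shape shared by all sources (hypothetical schema)."""
    source: str
    region: str
    metric: str
    value: float


def from_health(record):
    # Hypothetical smart-healthcare format: {"district": ..., "fever_cases": ...}
    return Observation("health", record["district"].lower(),
                       "fever_cases", float(record["fever_cases"]))


def from_traffic(record):
    # Hypothetical smart-vehicular format: (region, vehicles_per_hour)
    region, vehicles_per_hour = record
    return Observation("traffic", region.lower(),
                       "vehicles_per_hour", float(vehicles_per_hour))


def integrate(observations):
    """Group normalized observations by region so they can be analyzed together."""
    by_region = {}
    for obs in observations:
        by_region.setdefault(obs.region, {})[obs.metric] = obs.value
    return by_region


# Invented sample feeds, for illustration only.
health_feed = [{"district": "North", "fever_cases": "12"}]
traffic_feed = [("NORTH", 340), ("south", 95)]

observations = ([from_health(r) for r in health_feed]
                + [from_traffic(r) for r in traffic_feed])
combined = integrate(observations)
print(combined)
```

Each source gets its own small adapter, so adding another autonomous system only means writing one more conversion function; the analysis code sees a single record type.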

The Framework of the Ternion

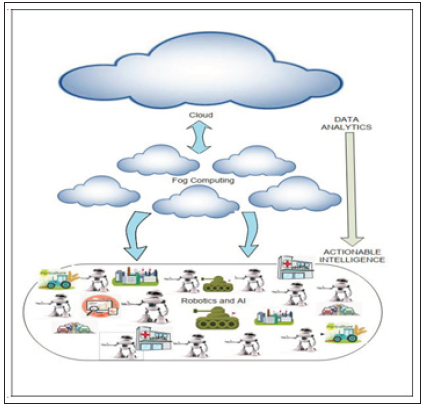

Figure 1: The framework of the ternion (fog computing, robotics, and AI) for multi-disciplinary data analytics and decision making based on data received from ubiquitous internet-enabled devices.

Figure 1 describes the coordination of fog computing, robotics, and AI [2] for decision making based on data from multi-disciplinary systems with ubiquitous devices. The internet-enabled devices of autonomous systems such as smart vehicular networks and smart homes generate large volumes of data, which the fog nodes collect for analysis and processing. Fog nodes perform online analytics on the data as it arrives and forward the results to the robots for decision making. The data collected at the fog nodes are also sent in batches to the cloud data center for batch analytics. The cloud data center runs descriptive, diagnostic, predictive, and prescriptive analytics on the batch data received from fog nodes over a long period. Through complex AI algorithms, the robots learn from the analytics results produced by the data center to make better decisions. A rich interplay between fog computing, robotics [3], and AI helps in making decisions for multiple problem scenarios from data generated by various autonomous systems at different time scales.
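The data flow described for Figure 1 can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the paper's implementation: the class names `FogNode`, `CloudDataCenter`, and `Robot`, the threshold rule, and the readings are all hypothetical, and the "learning" step is reduced to the cloud refining an alert threshold from accumulated batches.

```python
import statistics


class FogNode:
    """Edge node: reacts to each reading at once and buffers readings for the cloud."""

    def __init__(self, alert_threshold, batch_size):
        self.alert_threshold = alert_threshold
        self.batch_size = batch_size
        self._buffer = []

    def ingest(self, reading):
        """Return (alert, batch); batch is None until the buffer is full."""
        self._buffer.append(reading)
        alert = reading > self.alert_threshold  # real-time result for the robot
        batch = None
        if len(self._buffer) >= self.batch_size:
            batch, self._buffer = self._buffer, []
        return alert, batch


class CloudDataCenter:
    """Runs a simple descriptive analysis over all batches received so far."""

    def __init__(self):
        self.history = []

    def analyze(self, batch):
        self.history.extend(batch)
        mean = statistics.mean(self.history)
        spread = statistics.pstdev(self.history)
        # Assumed rule: flag readings more than two standard deviations above the mean.
        return mean + 2 * spread


class Robot:
    """Acts on fog alerts; a stand-in for the AI-driven decision maker."""

    def __init__(self):
        self.actions = []

    def decide(self, reading, alert):
        if alert:
            self.actions.append(("inspect", reading))


fog = FogNode(alert_threshold=50.0, batch_size=4)
cloud = CloudDataCenter()
robot = Robot()

# Hypothetical device readings, for illustration only.
for reading in [10.0, 12.0, 11.0, 13.0, 40.0, 12.0, 11.0, 10.0]:
    alert, batch = fog.ingest(reading)
    robot.decide(reading, alert)
    if batch is not None:
        # Long-term analysis refines the threshold used for real-time decisions.
        fog.alert_threshold = cloud.analyze(batch)

print(robot.actions)
```

With the initial threshold of 50.0 the reading of 40.0 would pass unnoticed; after the first batch reaches the cloud, the refined threshold lets the fog node flag it in real time, which is the interplay between the two time scales that the framework relies on.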

Case Study: The Pandemic Coronavirus

This case study deals with the control and prevention of the COVID-19 pandemic that has gripped the world. The novel coronavirus, nCoV, was initially reported on 31 December 2019, and WHO declared it a pandemic on 11 March 2020. No cure or vaccine for nCoV is known to date. Hence, it is crucial to control and prevent the spread of the virus, which requires deep insight into all forms of data that can be related to it. Several factors [4] contribute to the spread of nCoV, and studies suggest the following parameters:

a. Climate and temperature: The transmission of a similar coronavirus, SARS-CoV, is reported to decrease in warm and humid conditions.

b. Medium of transmission: nCoV, which causes a respiratory infection, is transmitted through droplets and contaminated surfaces.

c. Geographical parameters

d. Human mobility

e. Age, sex, and co-morbidities of patients

f. Epidemiological data

g. Latency, incubation, and infection rates and periods of nCoV

h. Human-to-human contacts, animal-to-human contacts

i. Health parameters

j. Recovery and reinfection rate

k. Mutation of the virus and other factors.

An interdisciplinary investigation may give deeper insight into other factors that contribute to the evolving pandemic. The parameters for analyzing the spread of nCoV include physiological and health conditions as well as geographical, climatic, social, economic, and mobility factors, among others. Collecting, integrating, and analyzing the available data for all these parameters will give a clearer picture of the causes of the spread, which helps in formulating preventive measures. Hence, data from various sources have to be processed for both short-term and long-term decision making. Robots can serve as sources of data as well as agents of data processing and decision making. The collaboration of the cloud data center, the fog nodes, and the robots at the lowest layer can identify the pattern of spread through real-time and batch analytics. Where social distancing needs to be maintained, robots can act on the analysis results from the fog nodes and the cloud. Multiple data sets need to be combined to find the pattern of transmission so that action can be taken where only limited human intervention is possible. Fog computing, robotics, and AI thus pave the way for controlling and preventing the spread of the pandemic through deep analysis of data. The robots can employ various AI algorithms for learning and informed decision making in states of emergency such as a pandemic.
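One simple way to combine data sets when looking for a pattern of transmission is to measure how strongly two series move together. The sketch below computes a Pearson correlation coefficient in plain Python. It is an illustration only: the `pearson` helper is hypothetical, and the weekly values are synthetic numbers invented for the example, not epidemiological data. A strong correlation would suggest a relationship worth investigating; it would not by itself establish a cause.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("series must have the same length of at least 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / math.sqrt(var_x * var_y)


# Synthetic weekly values for one region, invented purely for illustration.
mobility_index = [1.0, 2.0, 3.0, 4.0, 5.0]
new_cases = [12.0, 14.0, 16.0, 18.0, 20.0]    # constructed to rise with mobility
temperature = [30.0, 28.0, 26.0, 24.0, 22.0]  # constructed to fall over the same weeks

r_mobility = pearson(mobility_index, new_cases)
r_temperature = pearson(temperature, new_cases)
print(round(r_mobility, 3), round(r_temperature, 3))
```

Because the synthetic series are exactly linear, the coefficients come out at the extremes of the range; real multi-source data would give intermediate values and would need the integration step to align records by region and time first.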

Conclusion

The coordination of fog computing, robotics, and AI supports analytics on multi-disciplinary data for actionable intelligence. Short-term analytics at the fog nodes aids quick decision making, while the cloud data center provides long-term analytics. Analytics at various levels of granularity gives sound insight into changing data patterns. Ubiquitous computing generates an enormous amount of data, and processing it strategically yields valuable information for devising solutions and corrective measures and for improving predictability. To conclude, data analytics at various granularities and time scales helps in making the right decisions and provides a high level of predictability.

References

- Srivastava S (2020) Smart robots: The potential benefits of combining AI with robotics. Artificial Intelligence Latest News Robotics.

- Finnigan M (2017) Rise of the robots: From big data to artificial intelligence. Campden FB.

- https://goldberg.berkeley.edu/fog-robotics/

- Lin K, Fong DYT, Zhu B, Karlberg J (2006) Environmental factors on the SARS epidemic: Air temperature, passage of time and multiplicative effect of hospital infection. Epidemiol Infect 134: 223-230.

© 2020 Nair B. This is an open access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and build upon your work non-commercially.
