
Biodiversity Online J

A Computational Approach for Species Abundance using Aerial Photography

Saurabh S* and Alok A

School of Computer Science and Engineering, University of Petroleum and Energy Studies, India

*Corresponding author: Saurabh Shanu, School of Computer Science and Engineering, University of Petroleum and Energy Studies, Dehradun 248007, Uttarakhand, India

Submission: June 28, 2022; Published: September 08, 2022

ISSN 2637-7082 Volume 4 Issue 2

Opinion

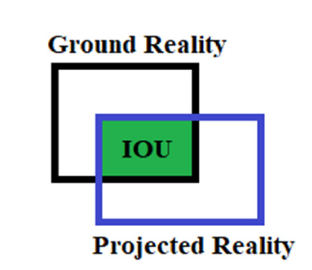

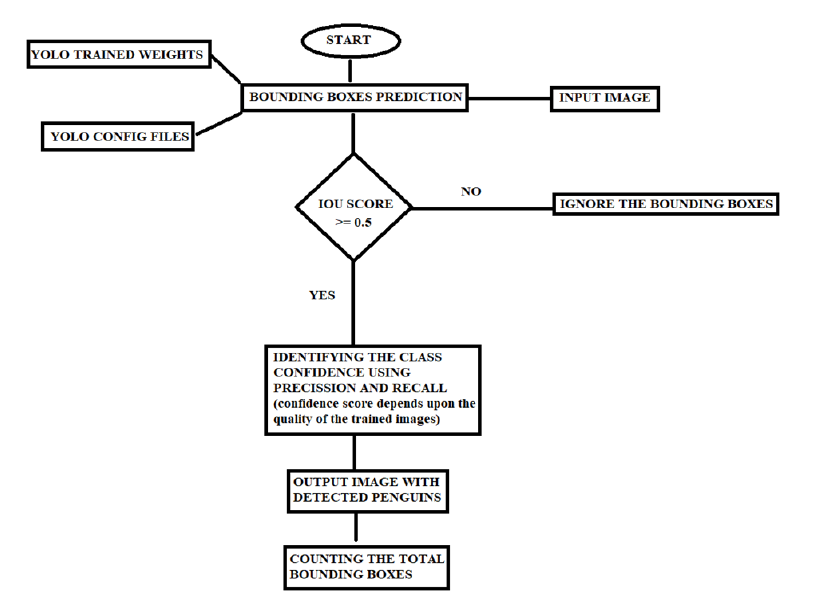

In this paper, we analyze and present an algorithm that uses deep learning models to count the number of penguins in a focal landscape. Deep learning models learn from a series of training datasets [1], which in this case are images f[x,y], through a set of hidden layers between the input and output layers of an Artificial Neural Network [2]. Counting penguins in aerial images requires two major components: first, identifying the penguins, and second, marking the region occupied by each penguin with a bounding box so that individuals can be counted. More broadly, the task consists of recognition and of prediction based on previously trained weights and models [3]. The workflow therefore involves data preparation, followed by recognition and bounding, classification, and finally counting the number of individuals in the population.

In the first part of training, we use You Only Look Once (YOLO) models, state-of-the-art detectors that locate objects in real time. We chose YOLO because, in real-time scenarios, it outperforms common alternatives such as Region-based Convolutional Neural Networks (R-CNN) and SPP-net in both accuracy and speed [4], giving faster processing for real-time image analysis together with better accuracy [5]. For this paper, we applied YOLO trained weights and a configuration file to the training dataset images to place bounding boxes around the penguins in the aerial photographs. The images were first annotated manually and then converted into training images through feature extraction and penguin recognition.

Intersection over Union (IoU) is a metric for determining how accurate an object detector is on a given dataset [6]. As shown in Figure 1, IoU compares two bounding boxes, one representing the ground truth and the other the prediction: it is the area of their intersection divided by the area of their union.
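The IoU computation described above can be sketched in Python as follows. This is a minimal illustration, not the authors' code: it assumes boxes are given as `(x_min, y_min, x_max, y_max)` in continuous pixel coordinates, and the function name `iou` and the example boxes are hypothetical.

```python
from typing import Tuple

# Assumed box format for this sketch: (x_min, y_min, x_max, y_max) in pixels.
Box = Tuple[float, float, float, float]


def iou(box_a: Box, box_b: Box) -> float:
    """Return the Intersection over Union of two axis-aligned boxes."""
    # Corners of the overlapping rectangle (possibly empty).
    ix_min = max(box_a[0], box_b[0])
    iy_min = max(box_a[1], box_b[1])
    ix_max = min(box_a[2], box_b[2])
    iy_max = min(box_a[3], box_b[3])

    # Clamp at zero so that disjoint boxes give an empty intersection.
    inter_w = max(0.0, ix_max - ix_min)
    inter_h = max(0.0, iy_max - iy_min)
    intersection = inter_w * inter_h

    # Union = area A + area B - the doubly counted overlap.
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


ground_truth = (10, 10, 50, 50)  # hypothetical annotated penguin
predicted = (20, 20, 60, 60)     # hypothetical detector output
print(round(iou(ground_truth, predicted), 3))  # → 0.391
```

With these two boxes the overlap is 900 px² and the union 2300 px², so the IoU is about 0.39, which would fall below a cutoff of 0.5.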

For this model, the IoU threshold has been set to 0.5, which serves as the cutoff for accepting a bounding box. Detections whose bounding boxes had IoU < 0.5 were not accepted and were classified as False Positives (FP), while those with IoU ≥ 0.5 were classified as True Positives (TP). In a few instances there are no penguins in the landscape and the model correctly predicts their absence; this outcome is called a True Negative (TN). In other cases, penguins are present in the landscape but the model does not predict them, resulting in a Type II error known as a False Negative (FN) [7]. The accuracy of the predictions is critical for evaluating any predictive model, and in recent years researchers have used Precision (P) and Recall (R) to measure it [8]. Figure 2 depicts the workflow from initial training to the production of a final count of penguins based on aerial photographs.
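The classification of detections into TP, FP and FN at the 0.5 threshold, together with Precision = TP / (TP + FP) and Recall = TP / (TP + FN), can be sketched as below. This is a hedged illustration rather than the authors' evaluation code: it assumes the predictions are already sorted by descending confidence, matches each prediction greedily to the unmatched ground-truth box with the highest IoU, and uses hypothetical names (`evaluate`, `annotated`, `detected`) and made-up boxes. True negatives (landscapes with no penguins and no predicted boxes) do not enter either formula.

```python
from typing import List, Tuple

# Assumed box format: (x_min, y_min, x_max, y_max) in pixel coordinates.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over Union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def evaluate(predictions: List[Box], ground_truths: List[Box], threshold: float = 0.5):
    """Return (TP, FP, FN, precision, recall) for one image."""
    matched = set()  # indices of ground-truth boxes already paired
    tp = fp = 0
    for pred in predictions:  # assumed sorted by descending confidence
        best_iou, best_idx = 0.0, -1
        for idx, gt in enumerate(ground_truths):
            if idx not in matched:
                score = iou(pred, gt)
                if score > best_iou:
                    best_iou, best_idx = score, idx
        if best_idx >= 0 and best_iou >= threshold:
            tp += 1  # IoU >= threshold with an unclaimed penguin
            matched.add(best_idx)
        else:
            fp += 1  # IoU < threshold with every unclaimed penguin
    fn = len(ground_truths) - len(matched)  # penguins the model missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return tp, fp, fn, precision, recall


annotated = [(10, 10, 50, 50), (100, 100, 140, 140), (200, 200, 240, 240)]
detected = [(12, 12, 52, 52), (105, 100, 145, 140), (300, 300, 340, 340)]
tp, fp, fn, p, r = evaluate(detected, annotated)
print(f"TP={tp} FP={fp} FN={fn} P={p:.2f} R={r:.2f}")  # → TP=2 FP=1 FN=1 P=0.67 R=0.67
```

Greedy matching ensures each annotated penguin can be claimed by at most one prediction, so a duplicate box on the same penguin is counted as a false positive rather than a second true positive.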

Figure 1: Intersection over union.

Figure 2: Flowchart of the work.
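The final counting step of the workflow is not detailed in the text. A common way to turn raw YOLO outputs into a count, assumed here rather than taken from the paper, is to discard low-confidence detections, apply greedy non-maximum suppression (NMS) so that overlapping boxes on the same penguin are counted once, and count the surviving boxes. The thresholds (0.5 confidence, 0.45 NMS IoU), the function name `count_penguins`, and the example detections are hypothetical.

```python
from typing import List, Tuple

# Assumed box format: (x_min, y_min, x_max, y_max) in pixel coordinates.
Box = Tuple[float, float, float, float]
# A detection pairs a box with the detector's confidence score.
Detection = Tuple[Box, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over Union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def count_penguins(
    detections: List[Detection],
    conf_threshold: float = 0.5,  # hypothetical confidence cutoff
    nms_threshold: float = 0.45,  # hypothetical NMS overlap cutoff
) -> int:
    """Count penguins as the boxes that survive confidence filtering and NMS."""
    # Keep only sufficiently confident detections, most confident first.
    candidates = sorted(
        (d for d in detections if d[1] >= conf_threshold),
        key=lambda d: d[1],
        reverse=True,
    )
    kept: List[Box] = []
    for box, _score in candidates:
        # Suppress a box that overlaps an already-kept box too strongly,
        # since it most likely covers the same penguin.
        if all(iou(box, k) < nms_threshold for k in kept):
            kept.append(box)
    return len(kept)


detections = [
    ((10, 10, 50, 50), 0.92),
    ((12, 11, 51, 49), 0.80),      # near-duplicate of the first box
    ((100, 100, 140, 140), 0.75),
    ((200, 200, 240, 240), 0.30),  # below the confidence cutoff
]
print(count_penguins(detections))  # → 2
```

Here the second box overlaps the first with an IoU of about 0.88 and is suppressed as a duplicate, and the fourth is dropped for low confidence, so the sketch reports two penguins.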

References

- Valdes G, Romero M, Interian Y, Solberg T (2020) PO-1527: When small is too small? Training deep learning models in limited datasets. Radiotherapy and Oncology 152: S825.

- Walczak S, Cerpa N (2003) Neural Network.

- Sarkar D (2018) A comprehensive hands-on guide to transfer learning with real-world applications in deep learning. Medium.

- Brownlee J (2019) How to perform object detection with YOLOv3 in Keras. Machine Learning Mastery.

- Wu J (2018) Complexity and accuracy analysis of common artificial neural networks on pedestrian detection. MATEC Web Conf 232(3): 01003.

- Rosebrock A (2021) Intersection over union (IoU) for object detection. PyImageSearch.

- Khandelwal R (2020) Evaluating performance of an object detection model. Medium.

- Beger A (2016) Precision-recall curves. SSRN Electronic Journal.

© 2023 Saurabh S. This is an open access article distributed under the terms of the Creative Commons Attribution License , which permits unrestricted use, distribution, and build upon your work non-commercially.

This work is licensed under a Creative Commons Attribution 4.0 International License. Based on a work at www.crimsonpublishers.com.
