Annals of Chemical Science Research

Pitfalls Associated with Translating Pulp and Paper Research Findings into Commercially Relevant Results as Illustrated with Case Studies

Peter W Hart*

Director: Research, Development and Innovation WestRock Richmond, USA

*Corresponding author: Peter W Hart, Director Research, Development and Innovation WestRock Richmond, USA

Submission: February 15, 2022; Published: February 23, 2022

Volume 2 Issue 5, February 2022

Abstract

Misunderstandings and technical language barriers often arise when questions and answers cross the divide between research and commercial operations. If researchers are not careful in how they define and conduct their laboratory experiments, a short-answer problem such as “how will changing my chip distribution improve my yield?” can morph into a 2-year laboratory project that is of limited (if any) use to the pulp and paper mill. Similarly, a question such as “how do I improve the stiffness of my sheet while maintaining high bulk?” may result in a red herring that gives research a black eye, consumes 3-4 years of work, and produces no valuable results for the pulp and paper mill. These two case studies, plus an example of improper data collection and understanding in a pulp and paper mill, are used as illustrations to discuss the importance of designing and reporting research studies with the actual commercial facility in mind.

Introduction

Research in the pulp and paper industry comes in many forms. Some types of research will never have practical application but help advance fundamental understanding. Efforts such as understanding why the rate of β-O-4 bond cleavage depends on the type of aromatic nucleus [1], or computational studies of the steric effects of bulky tethered aryl piperazines on reactivity [2], fall into this category. Other efforts appear to have immediate and relevant results which could be applied in a mill but, upon closer inspection, will have at best only niche application. Many biorefinery projects, such as rice straw biorefineries [3] or reports on sustainable woody biomass biorefineries [4], fall into this category. These types of projects frequently fail to account for the equipment-heavy requirements which drive up capital costs. In many cases, the specialty chemical price is used in calculations without considering the impact of transitioning from specialty to commodity pricing [5]. Many of these projects also do not account for actual marketability. In some instances, one biorefinery would supply the entire world demand. In other cases, the total biorefinery production is so small relative to the market that distribution is a problem: no one is willing to actually collect and use the final products. Finally, most operating mills have no idea how to market, sell, or predict future sales for the multiple biorefinery products which must be generated and sold to make money, making Wall Street guidance difficult and actual financial gain nearly impossible.

Some research efforts address relatively complex issues which negatively impact mill production, quality, or safety. In reality, mill operations and engineering personnel are required to focus on production output, which limits their ability to digest research reports and their potential benefits to mill processes. Because of production pressures, they rely on vendors or corporate technology specialists to uncover new work and explain how it will help their specific mills. Examples of this type of research include fundamental understanding of non-process element scale formation in bleach plants [6-10] and the modeling and understanding of hydrogen peroxide explosions in kraft bleach plants [11-14].

Many innovative research projects require some form of scale-up from the benchtop to full-scale production. Unfortunately, several scale-up projects moving from the lab to full-scale production fail [15,16]. There are several well-known difficulties associated with scaling up lab results to commercial-scale applications. Channeling, mixing, mass and heat transfer issues, and concentration/recycle loops which can poison a process are all challenges which must be overcome. Typically, scale-up is accomplished in steps in order to minimize the risks and identify potential problems with each jump. Ideally, scale-up would proceed from test tube size to benchtop size, to proof of concept, to pilot plant size before becoming commercial. With today’s push for “rapid innovation,” several of these steps are often eliminated as too time consuming, too costly, or both. Unfortunately, this usually comes at the expense of a robust, well-run commercial plant or results in total project failure. All these issues can impact the successful implementation of a project.

The final type of research can usually be implemented at commercial scale directly from the lab without passing through the scale-up process. These types of projects are usually performed at the request of mill supervision to solve specific mill issues. More often than not, these projects involve optimization, process improvement, or cost reduction efforts. They can require some rather complex thinking in conjunction with a fairly deep understanding of the actual mill requesting the project. One example is how the mill can maximize energy production and maintain steam header pressure without venting low-pressure steam to the atmosphere, in order to minimize pressure swings in the header and reduce fuel usage and the mill’s carbon footprint [17]. Other requests involve questions that appear to be relatively straightforward but are usually difficult to answer, such as “does the use of bleaching enzymes negatively impact fully bleached pulp yield?” [18].

Many times, a commercial scale operations manager will reach out to a technical or scientific person who may be a vendor, university professor, technical person from another mill or an internal R&D person, etc., within their circle of influence and ask a seemingly easy technical question. If that person has the facilities at their disposal, they may design a set of experiments and send the results with an answer or suggestion for changing operating procedures to address the underlying cause of the initial question. In a small but significant number of these cases, the implementation of these laboratory based operating changes will not result in the outcome expected from the laboratory studies. In some instances, these changes may actually result in decreased rather than improved process efficiency. Upon closer evaluation, it may be determined that the laboratory experiments that were performed were done correctly and produced consistent, reproducible results, but the experimental design itself did not faithfully reproduce the actual conditions employed in the commercial scale operation, and hence, the laboratory results may not translate at the commercial operations level. Projects of this nature lead to a distrust of R&D efforts at a mill level and make implementation and process improvement more difficult.

As discussed above, working on R&D projects with pulp and paper mill personnel can sometimes be difficult. Important fundamental research is considered secondary at best, and first-principle understanding, which could have a direct positive impact upon mill operations (and production output), can be too time consuming to understand and apply. Additionally, there are several applied projects which can be accurately researched but do not translate directly to a specific mill due to inherent differences between lab and mill operating conditions. The current work attempts to demonstrate these types of pitfalls and disconnects associated with applying lab-based solutions to an operating pulp and paper mill using various case studies. Each case study represents a request from an operating mill to the R&D group for assistance with a specific problem. Often, the request was accompanied by suggestions of how to perform the study. In many instances, discrepancies between lab and mill operating conditions would have made the results erroneous for the mill if the study had been conducted as requested.

Case Studies

Chip thickness impact on yield

An interesting case study which demonstrates this research trap is the determination of optimal chip thickness to enhance pulp yield. The mill was installing a new chip handling and screening system and wanted to optimize the new system for yield by removing anything over 8mm in thickness from going to the digester. In current operation, the mill sends roughly 10% over thick chips to the digester.

The initial suggestion was to simply obtain the various chip fractions, i.e., pins and fines, 2 to 10 mm thick accepts, and over 10 mm thick chips, and cook them to determine the yield of each fraction. Upon visiting the mill, it was determined that several other fractions existed. The mill was resizing over thick chips, which produced a chip fraction different from the others: it contained a fair number of pins and fines, and it could be expected to increase in total volume if the mill started screening out 8 mm thick chips as oversized rather than 10 mm thick chips. There was also a processed knots stream being reintroduced into the higher kappa number target cook stream, resulting in a total of 5 different fractions.

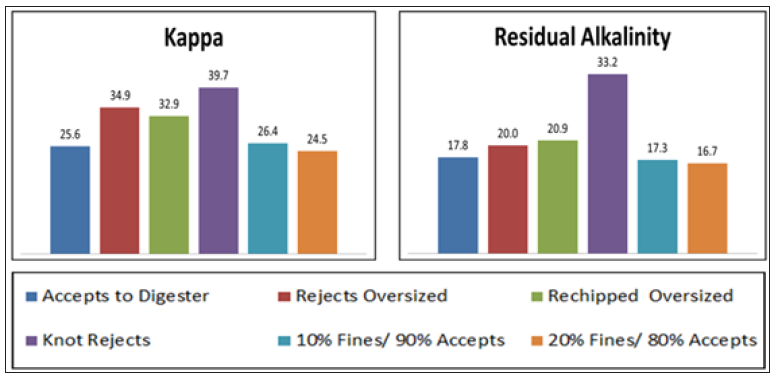

Upon cooking each fraction, it was determined that each specific fraction resulted in a different kappa number when cooked under standard mill conditions. Additionally, each fraction also consumed differing amounts of alkali. Thus, simply cooking each fraction alone could not be used to answer the mill question. In order to prove this point, a set of cooks was performed using hand prepared chips obtained by manually blending specific fractions to obtain a desired chip blend. In this case, chips with 10 and 20% fines with either 90 or 80% accept chips were cooked. The results showed a lower kappa number, lower residual alkali and lower yield for the higher fines content chips (Figure 1).

Figure 1: Kappa number and residual alkalinity for lab cooks performed at 25% AA and 1114 H-factor.

Typically, literature sources suggest that long thin chips pulp rapidly while the pulping of chips thicker than 10 mm results in increased shives, which lowers screened pulp yield. These studies all assume a uniform chip size in the digester rather than a distribution. When using chips with a typical chipper-supplied distribution, two things become clear: thickness is the principal dimension of concern in kraft pulping, and 2-8 mm thick chips are ideal [19]. When dealing with chip distributions, over thick chips tend to drive the total alkali requirement for the cook in order to reduce shive content in the pulp, and they result in a lower screened yield [20].

Re-chipping over thick chips results in a substantial loss in pulp yield due to excessive fines generation. Thus, lowering the over thick cutoff from 10 mm to 8 mm without doing any other work to improve the chipper size distribution will result in more chips being reprocessed and more fines being produced. In order to account for this increase in reprocessed chips, it was agreed that hand-prepared lab digester chip charges would be used with increased amounts of re-chipped over thick chips and an over thick chip cutoff of 8 mm. The resulting blends exhibited a higher screened yield than the current blend with a 10 mm over thick cutoff, thus supporting a positive ROI for the mill project.

One of the major difficulties with this project was that the mill wanted a quick answer with just a few lab cooks being performed. The mill folks effectively designed a set of experiments which would not provide an answer to their question. As a result, several weeks of lab work were lost performing their testing, and several more weeks were required to convince the mill that hand-blended chip distributions would truly answer their question. A side benefit of performing the mill tests was an improved understanding of the impact of the various fractions on kappa number variability. As each fraction pulped differently, and in some cases quite differently from the average, the mill personnel began to understand that variations in specific streams such as the reprocessed knots stream could have a significant impact on kappa variability.

Impact of held digester on pulp kappa/quality

Another example of operations designing a set of experiments which would not produce results directly translatable from the lab to the mill involves held batch digesters. One mill was having difficulties meeting its batch digester blow schedule. Some digesters were not at the required H factor when it was time to blow. As a result, the steam to the digester would be shut off and the digester held as is until it came back around in the blow schedule sequence, at which point it would be brought up to blow pressure with steam and blown. Unfortunately, this resulted in the chips/pulp and liquor being held near cooking temperature for a significant amount of time (the time required to reach the next blow slot), so overcooked, low kappa pulp was blown into the blow tank.

The mill requested a research study using an electrically heated lab digester known as M&K which is characterized by a forced circulation loop. The suggested study was to simply overcook the digester by an entire blow cycle and compare the resulting pulp to a control pulp cooked in the same manner as the mill. Unfortunately, performing the testing in this manner would have given erroneous results because the forced circulation of heated liquor would not have simulated the held digester conditions experienced in the mill setting. Rather, it was suggested the time and temperature curve of several held digesters could be monitored to determine the total H factor accumulated by the held cooks. Using previous work which determined the relationship between pulp quality, kappa number and H factor, it would be easy to estimate the resulting kappa number of the held digester pulp.
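The suggested approach of accumulating H factor from a monitored time and temperature curve can be sketched as follows. This is a minimal illustration, not the calculation used in the study: it assumes the commonly cited Vroom form of the relative rate, exp(43.2 - 16115/T) with T in kelvin and time in hours, and the temperature log shown is hypothetical.

```python
import math


def relative_rate(temp_c):
    """Relative delignification rate (assumed Vroom form), about 1 at 100 degC."""
    temp_k = temp_c + 273.15
    return math.exp(43.2 - 16115.0 / temp_k)


def h_factor(times_h, temps_c):
    """Integrate the relative rate over time (hours) with the trapezoidal rule."""
    if len(times_h) != len(temps_c) or len(times_h) < 2:
        raise ValueError("need at least two matching time/temperature points")
    total = 0.0
    for i in range(1, len(times_h)):
        dt = times_h[i] - times_h[i - 1]
        if dt < 0:
            raise ValueError("times must be non-decreasing")
        avg_rate = 0.5 * (relative_rate(temps_c[i - 1]) + relative_rate(temps_c[i]))
        total += avg_rate * dt
    return total


# Hypothetical log of a held digester slowly cooling after the steam is shut off.
hold_times_h = [0.0, 0.5, 1.0, 1.5, 2.0]
hold_temps_c = [170.0, 166.0, 162.0, 159.0, 156.0]

extra_h = h_factor(hold_times_h, hold_temps_c)
print(f"H factor accumulated during the hold: {extra_h:.0f}")
```

The extra H factor from the hold would be added to the H factor of the normal cook and then read against the mill's existing kappa number versus H factor relationship.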

This project is another example where the mill approached research with a specific problem which, when answered, could lead to changes in current operating conditions. Some of the mill folks also had a specific idea of how to answer that problem. Unfortunately, such a suggestion would not have led to a solution which would have translated directly from the lab to the mill. Instead of performing the mill request, an alternative approach had to be developed and presented to the mill for approval. These alternative approval processes can be difficult because the R&D folks are effectively telling the mill that their solution has shortcomings and an alternate method needs to be employed. In some instances, it is difficult to obtain buy-in for the proposed solution because specific mill personnel are committed to the solution they proposed in the first place.

Uncooked wood in paperboard

In a similar vein to the problem with changing chip size distribution, a mill reached out to R&D to help them solve a problem with a significant amount of uncooked wood being found in the board being produced on the paper machine. Samples were obtained and analyzed using various analytical and microscopic techniques to prove the problem was indeed uncooked wood. Next, an inventory of core plugs from repulped broke rolls was conducted because experience has shown that some uncooked wood problems originate from repulping of core plugs instead of removing them prior to pushing rolls into the re-pulper. Finally, the pulp in the high-density storage chests was evaluated. As uncooked wood was found in the high-density pulp chests, the uncooked wood was determined to be a pulp mill issue and focus was shifted from the paper machines to the pulp mill.

Examination of the blow line pulp also showed the presence of uncooked wood in both the batch and continuous digester lines. Following the process further upstream, it was found that the mill had recently rebuilt their chipper and had skewed the chip distribution to a slightly thicker chip to increase throughput. The mill had set several chipper production records and had reported to senior management the glowing success of the chipper rebuild job.

Chip samples were collected and cooked in a lab digester using simulated mill cooking conditions. Uncooked wood was found to be present in the resulting pulp. The root cause of the wood in the sheet was determined to be that the new chip distribution being produced by the chipper was too thick; cooking liquor was not penetrating into the center of the over thick chips, resulting in uncooked cores. The obvious solution was to adjust the chipper to make a thinner chip.

For obvious reasons, the mill felt uncomfortable at best with this solution because it would mean the successful chipper rebuild which had been glowingly reported to management would not in fact be able to reach the production levels already reported. Thus, they wanted an alternative solution. After evaluating chip thickness screening and associated over-thick slicing or crushing, it was determined the only solution was to modify the chip distribution at the chipper and by doing so, the uncooked wood problem went away.

Sheet yellowing within the roll

The same grade of board was being made on two different paper machines within one mill. Board from both machines was being sent to a specific customer. Several complaints were received about board from PM B not meeting the L*a*b color specifications. The sheet was too yellow. Immediately, the mill implemented a task force to determine the root cause of this issue and correct it. A review of the testing and data logs supported that all the rolls in question had met specifications when they were released for sale by the machine. An inspection of rolls stored in various warehouses determined the rolls from PM B slowly yellowed over a two-week period while in storage, whereas the rolls from PM A did not yellow.

Not surprisingly, the papermakers initially suspected the pulp until it was pointed out that the same pulp, pulled from the same bleached high density storage tank, was being used on both machines and yellowing was only being experienced on one of them. The ISO manufacturing guidelines for the grade in question were obtained, and the written documentation from the machine runs of both PM A and PM B was compared to the manufacturing guides. According to the documentation, both machines were following proper procedures and were running nearly identically. Next, an evaluation of each chemical being used by both machines was performed. Both machines were using the same chemicals from the same suppliers and, according to the documentation, in about the same amounts. Next, lab tests were performed with each individual chemical, and accelerated drying methods were used to determine which chemicals had the potential to yellow the sheet. Under the extreme lab testing, almost all the chemicals had the potential to yellow the sheet to some extent. A couple of chemicals, however, exhibited significant levels of yellowing. An audit of these chemicals was performed comparing the inventory, purchased material, and amount of chemical used by the machines. The audit showed that a significant amount of a water box lubricant chemical was missing from inventory and had presumably been consumed by the paper machines.

During the next run of the grade on PM B, the use of this chemical was monitored and the operator performance was carefully evaluated. It was determined the operators on PM B were adding twice the amount of the water box lubricant that the manufacturing guidelines called for. However, in the ISO documentation for the run, they were recording the specified amount of chemical, not the amount they had actually used. When questioned about the discrepancy between the usage and the recorded amount, some operators stressed that this was an ISO grade and they had been drilled that the manufacturing specifications had to be used and recorded. Basically, the operators did not understand that they were actually supposed to run the machine within the manufacturing guidelines. Rather, they believed all they had to do was run the machine the way they thought was best and record results within the guidelines. We therefore determined the operators needed to be retrained in ISO procedures and manufacturing practice, and the yellowing problem was solved.
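The kind of inventory audit described above amounts to a simple material balance: what left inventory is compared with what the run documentation says was used. The sketch below is illustrative only; the function names and all quantities are hypothetical and are not taken from the mill audit.

```python
def consumed_from_inventory(opening_kg, purchased_kg, closing_kg):
    """Chemical that actually left inventory over the audit period."""
    return opening_kg + purchased_kg - closing_kg


def usage_discrepancy(opening_kg, purchased_kg, closing_kg, recorded_usage_kg):
    """Return (unaccounted kg, ratio of actual to recorded usage)."""
    actual = consumed_from_inventory(opening_kg, purchased_kg, closing_kg)
    return actual - recorded_usage_kg, actual / recorded_usage_kg


# Hypothetical audit figures for one chemical over one period.
missing_kg, ratio = usage_discrepancy(
    opening_kg=1000.0,
    purchased_kg=2000.0,
    closing_kg=600.0,
    recorded_usage_kg=1200.0,
)
print(f"Unaccounted for: {missing_kg:.0f} kg, actual/recorded = {ratio:.1f}")
```

A ratio well above 1 indicates that more chemical is being consumed than the run records show, which is the signal that prompted closer monitoring of the next run.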

Optimization efforts employing mill data

Often when working with a mill on optimization or new product trials, longer-term operating data must be used. These data sets are obtained from either routine operator testing or, in some cases, from a shorter period (a few days to about a month) of specialized testing added to the standard operator testing routine. When specialized testing is being done, it is important to train each tester on every shift how to perform the testing and to explain why the tests are important in order to get buy-in for the extra work. It is also important to routinely check on the testers’ techniques to ensure proper lab procedures are being followed. During one set of special month-long testing for an optimization project, a titration was required as part of the evaluation.

After about 10 days of testing, the results were looking promising, so it was decided to leave the mill for a week to pursue other projects while the operators continued to test the product. Upon returning to the mill, the optimization project had been abandoned because the data started to show great variability. Upon inspection, the data collected within the last week was actually found to be quite variable and did somewhat justify aborting the project. Discussions with the testers indicated they were still using the proper testing method. The correct testing solutions of the proper strength had been employed for the entire time testing was being performed. The proper indicator had been used as well. It was not possible to watch the testers perform the test as the equipment for the special test had been removed from the lab when the project was abandoned.

During one tester conversation, there was a random comment about using an eye dropper for the titration. After several questions, it was revealed that shortly after the R&D group left, the burette being used for the titration had been broken. One of the testers determined that 1 full eye dropper plus 24 drops was about equivalent to the volume of material they had been using from the burette so the testing was continued using the eye dropper instead of the burette. If the end point was obtained with more or less than 24 drops, the testers were ratioing the 24 drops to the typical volume used during titrations to obtain the reported results. The results of the testing were erratic which suggested the optimization trial was not working as designed and the trial was terminated by the mill. The trial was then deemed by the mill to be a research failure.

In both the yellowing board example and the optimization trial just discussed, operations were producing and recording erroneous data which had detrimental effects upon mill operations. All the operators involved thought they were doing the right thing to maintain production and quality. Unfortunately, these data sets were used during research efforts to solve specific mill problems leading to an aborted trial and a significant time lag in solving the associated customer complaint. When working with mill data, we came to the conclusion that it is important to view it with some level of skepticism.

Recausticizing optimization: A calibration issue

A mill was experiencing problems with their lime kiln and recausticizing area. A new mill engineer was assigned to increase the causticizing efficiency and to improve the kiln operation. The engineer was told to lean on the experience of the R&D group for assistance. Various unit operations were evaluated, and it was determined the lime mud going to the kiln was over washed and contained lower than expected solids. While attempting to determine the cause of the lower mud solids discharge, operators stated it had started when a significant change was made in the mass flow to the CD filters due to a recalibration of the specific gravity meter. Manual testing had been performed, and the mud flow equation in the DCS had been altered to match the manual testing numbers. The operators took several samples over time, and each sample correlated with the equation which had been replaced.

One specific operator routinely obtained different answers than the rest, but he took longer to test the sample than the other operators. When reviewing his method, it was found that the graduated cylinder used by the operators in the testing room had broken shortly before the specific gravity recalibration had occurred. He did not like the fact that specific gravity values appeared to abruptly change with the new cylinder, so he kept the broken one in his locker (against all safety regulations) and used that for his testing. Following this lead, a closer look was taken at the current graduated cylinder. It was found that the graduation marks on the new glass cylinder were off by as much as 5 mL, resulting in up to a 7% change in the reported specific gravity [21].
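The link between a volume marking error and the reported specific gravity can be illustrated with a short calculation. This is a sketch under stated assumptions: the article does not give the sample volume or the slurry specific gravity, so the 70 mL fill mark and the true specific gravity of 1.20 below are hypothetical values chosen only because a 5 mL error on about 70 mL is roughly 7%.

```python
def reported_sg(true_sg, marked_volume_ml, marking_error_ml):
    """Specific gravity computed from a weighed sample filled to a mis-marked line.

    The cylinder is filled to `marked_volume_ml`, but it actually holds
    `marked_volume_ml + marking_error_ml`. Water density is taken as 1 g/mL.
    """
    actual_volume_ml = marked_volume_ml + marking_error_ml
    mass_g = true_sg * actual_volume_ml       # what the balance reads
    return mass_g / marked_volume_ml          # what the operator calculates


# Hypothetical values (not from the article): 70 mL mark, 5 mL error, true SG 1.20.
true_sg = 1.20
measured = reported_sg(true_sg, marked_volume_ml=70.0, marking_error_ml=5.0)
relative_error = (measured - true_sg) / true_sg
print(f"Reported SG {measured:.3f}, relative error {relative_error:.1%}")
```

Under these assumptions the relative error equals the marking error divided by the marked volume, so it does not depend on the true specific gravity.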

The graduated cylinder was taken directly from the storeroom from a box of new cylinders. It was of the same type typically used in general laboratories everywhere. The calibrations marked on the cylinder were simply wrong. As a result of trusting the equipment, significant process control changes were performed in the instrumentation resulting in operational problems and increased operating costs occurring over several months. It is important to verify even standard, routinely used pieces of equipment before making major process changes.

How to lower SBS density while maintaining stiffness: A red herring research failure

Two mills were producing the same grades of SBS. One mill produced board with a significantly higher density than the other mill. An R&D project was initiated to determine how to maintain or enhance stiffness at a lower basis weight. This project was started during a budget curtailment and assigned to a scientist who did not like to leave the lab and who had never visited the mill being studied. After several calls with the two mills, chips were sent to the lab and cooks were made. The resulting pulp was bleached and formed into hand sheets to determine if differences in the local wood basket were responsible for the density differences. All available forms of fiber measurements were made. Differences in the bleaching sequences between the mills were also evaluated in the lab. Lab produced pulps did not appear to produce significant differences in the bulk/stiffness ratio.

Next, the mills collected pulp samples from their bleached high-density chests and sent the pulp to the lab. Measurements of the mill-produced pulps were made and compared to the lab pulp. It was determined that fiber kink and curl of the fully bleached pulp from one mill was considerably higher than that of both the lab-produced pulp and the fully bleached pulp from the other mill. Since the literature suggests that kink and curl can have a large impact upon bulk and other fiber properties [22,23], it was decided to perform an in-depth analysis of kink formation throughout the mill. Mill personnel were asked to collect fiber samples after every single piece of pulp processing equipment. After some compromise, it was agreed to collect samples after brown stock washing, after screening, off the decker mat, from the pump feeding the bleach plant, after each stage of bleach washing, and from the pulp leaving the bleached high-density storage chests going to the paper machines. Mill personnel collected these samples once a month for a year and sent the samples to the lab for kink measurements.

Over the course of the year, several articles were obtained that discussed the impact of kink and curl on fiber physical properties. The pulp measurements were consistently showing a large increase in fiber kink between the decker mat and the pulp leaving the bleach plant feed MC pump. Progress reports were submitted which stated the mill density issue could potentially be solved by reducing or eliminating the kink imparted by this one MC pump. Literature work suggested that mechanical action at consistencies greater than 10% can permanently increase fiber curl and kink in medium to low yield chemical pulps [24].

Meanwhile, the mill was being strongly encouraged to solve their density problem, so the mill embraced the research effort and asked for a potential solution. The research answer supplied was if they could operate the bleach plant MC feed pump at the 7-9% consistency range, they would not impart the large amount of kink into the fiber and would not lose bulk in the resulting bleached board. Another research scientist familiar with the mill was asked to go to the mill while the trial was being performed and record data. The trial was an utter failure. The MC pump did not perform well at the lower consistencies and no density reduction was realized on the paper machine.

Pulp samples were collected and brought back to the lab. In addition, an extra sample was collected, which was a blow line sample from the continuous digester. When these samples were analyzed, it was determined that lowering the consistency of the MC pump did indeed reduce the kink by a substantial amount even though the expected result of density improvement was not realized. When the blow line sample was tested, it was found to have substantially higher kink than any other sample tested in the entire mill. Effectively, the fibers were severely kinked either in the digester or blow line and either straightened or re-kinked as they passed through different unit operations. Reducing the kink in a specific spot in the process had no impact on the final results since the fiber damage was already done and could not be repaired.

By relying on certain mill personnel instead of actually visiting the mill, limitations were placed upon the sampling program. When first asked for a blow line sample, the scientist was told that no sample point was available because it was quite difficult to get to and even more difficult to safely sample. Basically, some of the mill personnel did not want to collect it. Having never been to the mill, the scientist in charge was not able to challenge this statement, so the blow line was not included in the original sampling program. Also, because the scientist was not familiar with mill operations, it went unrecognized that the idea that one MC pump located at the beginning of the bleach plant was causing all the mill density issues was not particularly reasonable, and that the idea that an MC pump should be continually operated at 7-8% consistency was unreasonable. When working on mill-based problems, it is important to understand the actual mill and how it operates before planning to make major changes to its operational structure.

Conclusion

There are multiple potential pitfalls associated with performing various forms of development, optimization and cost reduction projects (often referred to as research projects by mill personnel) for operating pulp & paper mills. Several of the possible difficulties reported here have been presented through a series of seven case studies. Several of these issues may be resolved through open, transparent discourse between the mill and the research scientist/engineer. Others require that the researcher have a fairly deep understanding of the specific mill operation, or at least a trusted colleague at the mill with whom to work through specific process issues. Some issues involve maintaining data integrity.

One pitfall arises when the mill asks for help and has a specific idea of how the project should be performed. In some cases, the mill's approach would not produce results directly transferable to the mill. Also, due to production pressure, the mill wants the answer almost before it asks the question, so lengthy lab efforts are not welcome. When working with the mill, it is imperative to have a candid discussion of the actual experimental plan and the expected timeline for completion up front and to develop buy-in for the project. At this point, the scientist/engineer must ensure the agreed upon plan will provide the desired information.

Another issue involves mill politics. If the research results point to a solution the mill does not like for various reasons (see rebuilt chipper example in Uncooked Wood in Paperboard section above), the solution may not be implemented, and the mill will continue to state that R&D did not successfully solve the problem. Often, when the researcher attempts to work through alternative solutions with mill personnel, they will decide the proposed (mostly correct) solution should be quietly implemented.

Frequently, these types of projects will require the use of mill generated data. Before using any mill generated data set, the data should be carefully examined for potential outliers, strange series of data, or an unmoving series of data among other issues. Careful examination is required to ensure quality data are being used in your analysis.
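As an illustration of the kind of screening described above, the following is a minimal Python sketch, not a procedure taken from the case studies. It flags potential outliers with a median-based modified z-score and flags "unmoving" stretches where a reading repeats exactly. The function names, the 3.5 cutoff, the five-sample run length, and the example readings are all assumptions chosen for illustration; appropriate limits depend on the instrument and the process.

```python
from statistics import median


def find_outliers(values, threshold=3.5):
    """Return indices whose modified z-score (median/MAD based) exceeds threshold.

    The 3.5 cutoff is a commonly used rule of thumb, assumed here for illustration.
    """
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # No spread to judge against; rely on the flat-line check instead.
        return []
    return [i for i, v in enumerate(values)
            if 0.6745 * abs(v - med) / mad > threshold]


def find_flat_runs(values, min_run=5):
    """Return inclusive (start, end) index pairs where the reading repeats
    exactly for at least min_run consecutive samples."""
    runs = []
    start = 0
    for i in range(1, len(values) + 1):
        # A run ends at the end of the data or when the value changes.
        if i == len(values) or values[i] != values[start]:
            if i - start >= min_run:
                runs.append((start, i - 1))
            start = i
    return runs


# Hypothetical readings (made-up numbers, not mill data).
readings = [10.1, 10.3, 9.9, 10.2, 25.0, 10.0, 10.2, 9.8,
            10.4, 10.4, 10.4, 10.4, 10.4, 10.1, 9.9]

print(find_outliers(readings))   # [4]
print(find_flat_runs(readings))  # [(8, 12)]
```

Flagged points are candidates for a conversation with the mill, not automatic deletions: a spike may be a real upset, and a flat stretch may be a stuck transmitter or a value that was simply carried forward.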

Similar to the data quality issue, it is also important to be sure critical pieces of equipment are properly calibrated prior to using the equipment for drastic process changes. Even routinely purchased equipment such as a graduated cylinder can have significant and expensive ramifications if its calibration is not verified (refer to Re-causticizing Optimization: A Calibration Issue).

Finally, it is of critical importance to work directly with a mill and actually understand the specific mill processes before attempting to alter the entire operation and product quality of the mill. A significant amount of time can be lost chasing red herrings just because a few key samples were either not taken or improperly taken while the literature suggested a potential issue.

Implementing projects at a mill and enhancing both its operability and profitability can provide a rewarding and satisfying career. However, even after years of working with mills, significant “fishing expeditions” can arise when dealing with mill upsets. These must be identified early to avoid launching extensive research programs to solve short-term issues or chasing red herrings, and thereby to maintain a successful track record.

References

- Shimizu S, Yokoyama T, Matsumoto Y (2015) Why is the rate of the beta-O-4 bond cleavage dependent on the type of aromatic nucleus in the delignification during alkaline pulping process? 18th International Symposium on Wood, Fibre and Pulp Chemistry (ISWFPC), Vienna, Austria.

- Key RE, Elder T, Bozell JJ (2019) Steric effects of bulky tethered aryl piperazines on the reactivity of Co-Schiff base oxidation catalysts-a synthetic and computational study. Tetrahedron 75(23): 3118-3127.

- Abraham A, Mathew AK, Sindhu R, Pandey A, Binod P (2016) Potential of rice straw for bio-refining: An overview. Bioresource Technology 215: 29-36.

- Liu S, Lu H, Hu R, Shupe A, Lin L, et al. (2012) A sustainable woody biomass biorefinery. Biotechnology Advances 30(4): 785-810.

- Hart PW, Sommerfeld JT (1997) Cost estimation of specialty chemicals from laboratory-scale prices. Cost Engineering 39(3): 31-35.

- Litvay C, Rudie A, Hart PW (2003) Use of Excel ion exchange equilibrium solver with WinGEMS to model and predict NPE distribution in the MeadWestvaco Evadale, TX hardwood bleach plant. TAPPI 2003 Fall Conference, Chicago, IL, USA.

- Rudie AW, Hart PW (2005) Formation and prevention of calcium oxalate scale in the bleach plant. Solutions! for People, Processes and Paper 88(6): 45-46.

- Hart PW, Rudie AW (2006) Mineral scale management part I-case studies. TAPPI J 5(6): 22-27.

- Rudie AW, Hart PW (2006) Mineral scale management part II-fundamental chemistry. TAPPI J 5(7): 17-23.

- Rudie AW, Hart PW (2006) Mineral scale management part III-non-process elements in paper industry. TAPPI J 5(8): 3-9.

- Hart PW, Rudie AW (2010) Comparative evaluation of peroxide explosion hazards in chemical and mechanical pulp bleaching systems. Pulp & Paper Canada 111(4): T60-T63.

- Hart PW, Rudie AW (2011) Modeling an explosion: The devil is in the details. Chemical Engineering Education 45(1): 15-20.

- Hart PW, Houtman C, Hirth K (2013) Hydrogen peroxide and caustic soda: Dancing with a dragon while bleaching. TAPPI J 12(7): 59-65.

- Rudie AW, Hart PW (2018) Understanding the risks and rewards of using 50% versus 10% strength peroxide in pulp bleach plants. TAPPI J 17(11): 601-607.

- Hart PW, Gilboe MM, Adusei G, Mancosky D, Armstad DA (2005) Pilot scale trials of a low consistency oxygen delignification system performed with a Hydro Dynamics Shockwave Power Reactor. TAPPI J 4(1): 26-31.

- Banerjee S, Le T, Hart PW (2012) Scale inhibition and removal in continuous pulp digesters. Industrial and Engineering Chemistry Research 51(7): 10283-10286.

- Santos RB, Hart PW (2020) Case study: Paper mill power plant optimization-balancing steam venting with mill demand. TAPPI J 19(6): 317-321.

- Hart PW, Sharp III HF (2005) Statistical determination of the impact of enzymes on bleached pulp yield. TAPPI J 4(8): 3-6.

- Grace TM, Leopold B, Malcolm EW (1989) Process variables, in alkaline pulping. In: Grace TM, Leopold B, Malcolm EW (Eds.), (3rd edn), Joint Textbook Committee of the Paper Industry, CPPA-TAPPI, Montreal/Atlanta, Pulp & Paper Manufacture Series 1989, Chapter 5, 5: 90-96.

- Rajesh KS, Singaravel M, Subrahmanyam SV (2010) Chip size distribution-A lot can happen over its variation. IPPTA J 22(3): 93-96.

- Nesselrodt K, Lee S, Andrews JD, Hart PW (2015) Mill study on improving lime kiln efficiency. TAPPI J 14(2): 133-139.

- Joutsimo O, Wathén R, Tamminen T (2005) Effects of fiber deformations on pulp sheet properties and fiber strength. Paperi Ja Puu 87(6): 392-397.

- Sutton P, Joss C, Crossely B (2000) Factors affecting fiber characteristics in pulp. TAPPI Pulping Process and Product Quality Conference Proceedings.

- Page DH, Seth RS, Barbe M, Jordan B (1985) Curls, crimps, kinks and micro-compressions in pulp fibres-their origin, measurement and significance. Papermaking Raw Materials, Transactions of the 8th Fundamental Research Symposium, Oxford, pp. 183-227.

© 2022 Peter W Hart. This is an open access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and build upon your work non-commercially.

a Creative Commons Attribution 4.0 International License. Based on a work at www.crimsonpublishers.com.
